All of us, at one point or another, have experienced the dreaded screen tear. It’s that horrific moment when a jagged horizontal line slices through your image, making it look like Norman Bates took his kitchen knife to your game. Nvidia, being the graphics boffins they are, set out to banish that, along with stutter and other graphical ailments, for good. The result: the Nvidia G-Sync monitor. It actually sounds kind of amazing.

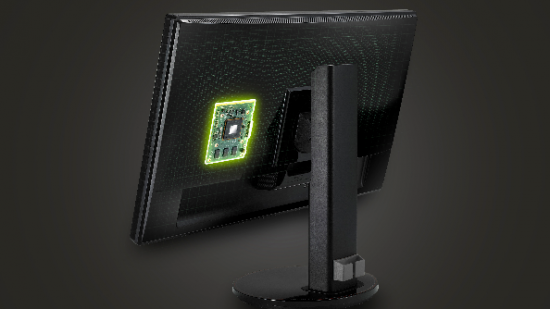

G-Sync is a new little chip that will sit inside monitors and, presumably through mystic voodoo, ensure that any issues with the signal between your graphics card and monitor are eliminated entirely. Various monitor manufacturers are already at work on G-Sync models, some supporting resolutions up to 4K, which should be on the market next year. Those who can’t wait that long will soon be able to buy the G-Sync module itself and install it themselves in an ASUS VG248QE monitor. There’s no word yet on the price of either the module or complete monitors, but if the result is as good as Nvidia claims, this could be the next essential element of your battle station.

For those not in the know, let us at PCGN be your guide to why screen tearing happens and why G-Sync is needed. Back when TV was first developed, its refresh rate was set at 60Hz. Fun fact: this is because the American power grid runs on 60Hz AC power, so sticking to that frequency made developing TV easier. That 60Hz standard has stuck around ever since, so a great deal is still geared around this refresh rate.

The big problem for computers is that graphics cards don’t render at a fixed speed, while monitors refresh at one. Turn on a frame rate counter and you’ll see that rate bounce all over the place. So your GPU is sending frames at a varying rate to something that only displays at a fixed rate. The result is screen tearing: the GPU delivers a new frame while your monitor is still partway through drawing the previous one, so you see slices of two different frames on screen at once. The usual fix has been VSync, which you’ve probably seen in the settings menu of most games. It makes your GPU wait until the monitor has finished its refresh before sending the next frame. However, if your GPU’s frame rate drops below the monitor’s refresh rate, frames miss their slot and have to wait for the following refresh, so you get stutter. VSync also adds latency and input lag. None of which we want. Hence Nvidia’s G-Sync, which flips things around so the monitor refreshes whenever the GPU has a frame ready, could be a pretty big deal.
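To put some rough numbers on that VSync stutter, here’s a small Python sketch. It’s a simplified model under our own assumptions, not how Nvidia or any driver actually works: VSync is treated as double-buffered (the GPU waits for each frame to be shown before starting the next), and the variable-refresh case simply has the monitor refresh the instant a frame is ready, ignoring the minimum and maximum refresh limits real panels have. The function names are hypothetical.

```python
from fractions import Fraction
import math

REFRESH_HZ = 60
# Exact time between refreshes at 60Hz: 50/3 ms (about 16.7 ms).
REFRESH_INTERVAL_MS = Fraction(1000, REFRESH_HZ)


def vsync_display_times(render_ms, frames):
    """Fixed 60Hz refresh with VSync (simplified double-buffered model).

    Assumption: each finished frame waits for the next refresh tick, and the
    GPU only starts rendering the next frame once the previous one is shown.
    """
    times = []
    t = Fraction(0)
    for _ in range(frames):
        done = t + render_ms
        # Round up to the next refresh boundary: the frame waits for its slot.
        shown = math.ceil(done / REFRESH_INTERVAL_MS) * REFRESH_INTERVAL_MS
        times.append(shown)
        t = shown
    return times


def variable_refresh_display_times(render_ms, frames):
    """Variable refresh (G-Sync-style idea): the monitor refreshes when a frame arrives."""
    return [render_ms * (i + 1) for i in range(frames)]


if __name__ == "__main__":
    render_ms = 20  # a GPU managing 50fps, just under the 60Hz refresh rate
    vsync = vsync_display_times(render_ms, 5)
    variable = variable_refresh_display_times(render_ms, 5)
    print("VSync:   ", [round(float(t), 1) for t in vsync])
    print("Variable:", [round(float(t), 1) for t in variable])
```

In this model, a GPU that needs 20ms per frame (50fps) misses every 60Hz slot under VSync, so each frame waits for the following refresh and new images appear only every 33.3ms, an effective 30fps. Letting the monitor wait for the GPU instead keeps all 50 frames per second on screen.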

John Carmack, Johan Andersson, Tim Sweeney and Mark Rein have all seen this new gear in action and given it their approval. The biggest leap since HD? We’ll just have to wait and see.

You can find out more on the hard specs of G-Sync technology at the Nvidia GeForce website.