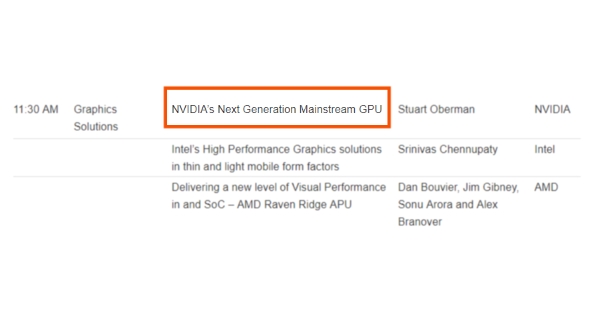

It looks like Nvidia’s next-generation graphics card, whether GTX 1180 or GTX 2080, will at least be announced by August 20, when the Hot Chips Symposium rolls around. The program for the event has now been released, with all the big names in attendance, and the talk that’s caught our attention is the one by Stuart Oberman titled ‘NVIDIA’s Next Generation Mainstream GPU’ in the Graphics Solution slot on the Monday.

The title has obviously been kept deliberately vague so we don’t get any early hints as to what the new gaming-focused Nvidia graphics cards are called, or what architecture they’re going to be powered by.


Given that Oberman’s Hot Chips talk will likely go into some detail about the new GeForce GPU, it seems pretty likely that the card will either be announced before then, or might possibly even be released by August 20. We’ve heard that Gamescom will be a big deal for Nvidia, and that falls just a day later…

Hot Chips issued a press release yesterday detailing the great and the good of PC gaming technology represented at the event, and Videocardz picked up the details of the talk.

“We will hear from the CPU and GPU giants: AMD featuring their next-gen client chip, NVIDIA with their next-gen GPU, and Intel with an interesting die-stacked CPU with iGPU plus stacked dGPU.”

That could mean we’ll also hear more about Threadripper 2 – which is set for an August launch – and we might even get some more details about what Intel is up to with its discrete GPU. If Intel is stacking its discrete graphics silicon that could make for some serious performance, even if it just used its existing architecture.

But this is the first concrete evidence from Nvidia that it’s definitely going to be shipping a new gaming GPU this summer, and that’s great news for everyone. Except AMD. And those of us with limited cash reserves…

Oh lord, it’s going to be horrifically expensive, isn’t it?