AI may not seem all that smart right now – just look at everything from Microsoft’s Twitter bot that went all racist to those robots that collapsed when trying to open doors – but one day, sci-fi novels assure us, it will overtake our feeble human minds. Human – The Singularity Project is about one such AI.

For other, less science-y games, here’s a list of the best indie games around.

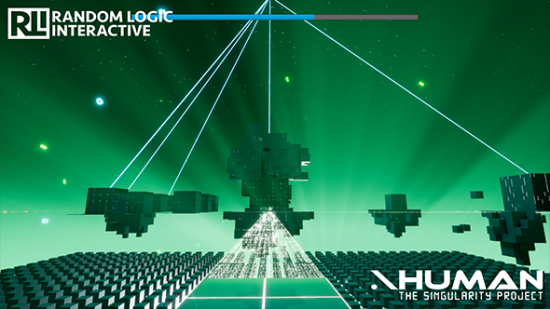

Originally an entry in Developing Beyond, the competition set up by Epic Games and the Wellcome Trust, Human – The Singularity Project made it to the semi-finals. Its developers, Random Logic Interactive, are now continuing work on the project outside of the contest.

You play as an AI that has become aware that it exists as an experiment in a research lab. You manage to gain access to the company network and, developer Jimmy Lotare tells me via email, become “motivated to break free using social engineering and hacking.”

Depending on what information you find while exploring the company archives, the AI will grow in different ways, shaped by the “opinions and actions of others.” This growth is reflected in the machine’s directives.

As part of the Developing Beyond competition, Random Logic Interactive got access to a number of scientists and researchers to talk about the central concepts of their game. Lotare tells me the team spoke with ethical and technical researchers at Oxford University about the “potential issues that might arise as AI develops,” things like ethical priorities – if an AI is asked to choose between saving one life and another, how does it weigh up which is the more worthy life?

However, Lotare says that the main collaboration was with a psychologist: associate professor Niclas Kaiser of Umeå University in Sweden. He advised on something called ‘mutual co-regulation’, the study of how the relationship between people changes during a conversation. This has informed much of how the game’s dialogue was written.

It all sounds like a fascinating dive into how machines may view people when they do eventually become self-aware. God, they’re going to hate us, aren’t they?