Intel Gen11 and next-gen graphics will support integer scaling following requests by the community. Intel’s Lisa Pearce confirmed that a patch will roll out sometime in August for Gen11 chips, adding support for the highly requested functionality in the Intel Graphics Command Center, with future Intel Xe graphics expected to follow suit in 2020.

Enthusiasts have been calling for the feature for quite some time, even petitioning AMD and Nvidia for driver support. Why, you ask? Integer scaling is an upscaling technique that enlarges an image by a whole-number factor. When a 1080p image is shown on a 4K display, for example, each pixel is duplicated into a 2×2 block of four identical pixels. Because the 4K output contains only the original 1080p colour values, with no new in-between values invented, the final image keeps its clarity and sharpness.
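To make the pixel duplication concrete, here is a minimal Python sketch. It assumes an image is just a list of rows of pixel values, and the function name `integer_scale` is our own illustration, not anything from Intel's driver, which does this work in display hardware. Scaling 1080p to 4K is a factor of 2 on each axis:

```python
def integer_scale(image, factor):
    """Upscale a 2D grid of pixel values by a whole-number factor.

    Each source pixel is copied into a factor x factor block, so no new
    colour values are invented and hard edges stay hard.
    """
    if not isinstance(factor, int) or factor < 1:
        raise ValueError("factor must be a positive integer")
    scaled = []
    for row in image:
        # Repeat each pixel horizontally `factor` times.
        wide_row = [pixel for pixel in row for _ in range(factor)]
        # Repeat the widened row vertically `factor` times (independent copies).
        scaled.extend(list(wide_row) for _ in range(factor))
    return scaled


# A 2x2 "image" scaled by 2 becomes 4x4, the same factor as 1080p -> 4K.
tiny = [[1, 2],
        [3, 4]]
for row in integer_scale(tiny, 2):
    print(row)
# [1, 1, 2, 2]
# [1, 1, 2, 2]
# [3, 3, 4, 4]
# [3, 3, 4, 4]
```

Every output pixel is a straight copy of an input pixel, which is why the result looks like a larger version of the original rather than a softened one.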

Common upscaling techniques, such as bilinear or bicubic filtering, interpolate colour values for the new pixels, which often leaves lines, details, and text in games looking blurry. This is particularly noticeable in pixel art games, whose art style relies on a sharp, blocky image. Nearest-neighbour interpolation, which simply copies the closest source pixel, is much closer to integer scaling, but at non-integer scale factors it duplicates some rows and columns more often than others, leaving pixels unevenly sized and distorting the image.
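The difference is easiest to see on a single row of pixels. The Python sketch below is illustrative only: both helper names are hypothetical, the linear version is a one-dimensional stand-in for bilinear filtering using a pixel-centre convention we chose, and real GPUs and display engines implement these filters in hardware with their own sampling rules.

```python
def nearest_neighbour_row(row, new_width):
    """Resample a row by copying, for each output pixel, the source pixel it lands on."""
    old_width = len(row)
    # Integer arithmetic maps output index x back to source index floor(x * old / new).
    return [row[(x * old_width) // new_width] for x in range(new_width)]


def linear_row(row, new_width):
    """Resample a row by blending the two closest source pixels (1D analogue of bilinear)."""
    old_width = len(row)
    out = []
    for x in range(new_width):
        # Centre of output pixel x, expressed in source-pixel coordinates.
        pos = (x + 0.5) * old_width / new_width - 0.5
        pos = min(max(pos, 0.0), old_width - 1)  # clamp at the image edges
        left = int(pos)
        right = min(left + 1, old_width - 1)
        t = pos - left  # weight given to the right-hand neighbour
        out.append(round(row[left] * (1 - t) + row[right] * t))
    return out


edge = [0, 255]  # a hard black-to-white edge
print(linear_row(edge, 4))             # [0, 64, 191, 255]: new greys blur the edge
print(nearest_neighbour_row(edge, 4))  # [0, 0, 255, 255]: at 2x, same as integer scaling

row = [10, 20, 30, 40]
print(nearest_neighbour_row(row, 6))   # [10, 10, 20, 30, 30, 40]: uneven at 1.5x
```

At a whole-number factor, nearest-neighbour gives exactly the integer-scaled result; at 1.5x, two source pixels get doubled and two do not, which is the uneven sizing that distorts pixel art.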

Meanwhile, 4K screens are becoming commonplace in today’s rigs, especially in the professional space, where integrated graphics reign supreme. Today’s top gaming graphics card, the RTX 2080 Ti, can just about cope with pushing 8,294,400 pixels every frame, but 4K is far from a perfect, one-size-fits-all resolution just yet.
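That pixel count is simply the 4K UHD resolution multiplied out, and it is exactly four times the pixel count of 1080p. This is why 1080p is the natural integer-scaled fallback on a 4K panel, at a factor of 2 on each axis:

$$
3840 \times 2160 = 8{,}294{,}400 = 4 \times (1920 \times 1080) = 4 \times 2{,}073{,}600
$$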

As such, it’s often nice to be able to drop the resolution every once in a while, taking a load off your GPU, without sacrificing fidelity. That’s where integer scaling comes in.

Our response to integer scaling @IntelGraphics pic.twitter.com/ggsLvpRMMf

— Lisa Pearce (@gfxlisa) June 24, 2019

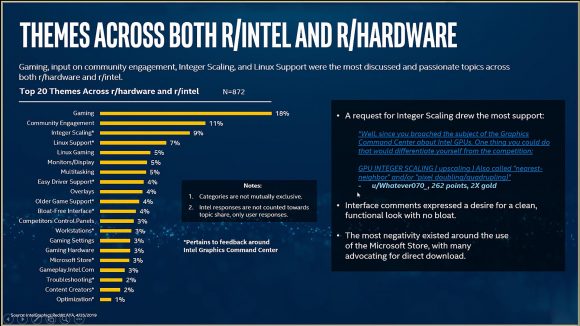

Intel is certainly taking a community-focused approach to its graphics card development, which is as much a software project as a hardware one, maybe even more so. We recently spoke with marketing lead Chris Hook about the discussion Intel’s Reddit AMAs and community feedback generate, and who ends up with that information.

“So a lot of discussion about integer scaling,” Hook tells us. “So every time this stuff comes up, these get widely circulated. We invest a lot of money in building these reports. So they go to all the engineering leaders.

“Then we go on a little bit of a tour on them as well internally and we share them. The engineers love this stuff. They’re really, really interested. They often delve into them, try to anticipate what we’re going to come in and talk about, what they think the community’s interested in. So these drive a lot of discussion.”

But there is bad news for the largest Intel install base right now: Gen9 graphics won’t support integer scaling. The hardware in most desktop and mobile chips predating Ice Lake does not support nearest-neighbour algorithms, Intel says, so the feature will be limited to its latest 10nm processors later this year and to its discrete graphics card, Intel Xe, when that arrives next year.

You will be able to apply the new upscaling technique from within the Intel Graphics Command Center at the end of August following a driver update from Intel.