Intel has released a new neuromorphic system, Pohoiki Beach, which attempts to mimic, as best silicon can, the vast interconnectivity of the most complex structure in the universe: the human brain. What should we apply this tech to first? Foosball, for one, but most importantly, creating smarter, better human prosthetics.

The new system comprises two Nahuku boards, each equipped with up to 32 Loihi chips. Each chip is designed to replicate the way animal brains operate, albeit on a far smaller scale, with silicon standing in for both neurons and synapses. We’re still a little off the roughly 86 billion neurons of the human brain, but in total Pohoiki Beach can be fitted with up to 8 million “neurons” and can run a variety of neural network topologies.
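So what does a silicon “neuron” actually do? Spiking chips like Loihi don’t pass continuous numbers around the way a GPU running a conventional neural network does. Instead, each neuron builds up charge from incoming spikes and fires a spike of its own once it crosses a threshold; Loihi is generally described as implementing a variant of the leaky integrate-and-fire (LIF) model. The Python below is a minimal, textbook LIF neuron, assuming a simple multiplicative leak and a reset to zero after each spike. The class and parameter names are hypothetical, and this is an illustration of the general idea, not Intel’s implementation or API.

```python
# A textbook discrete-time leaky integrate-and-fire (LIF) neuron.
# Illustrative only: names and values are hypothetical, not taken from
# Intel's Loihi hardware or software.
from dataclasses import dataclass, field


@dataclass
class LIFNeuron:
    decay: float = 0.9        # fraction of membrane potential kept each step (the "leak")
    threshold: float = 1.0    # potential at which the neuron fires a spike
    potential: float = 0.0    # current membrane potential
    spikes: list = field(default_factory=list)  # time steps at which the neuron fired

    def step(self, t: int, input_current: float) -> bool:
        """Advance one time step; return True if the neuron spikes."""
        # Leak some of the stored charge, then integrate the new input.
        self.potential = self.potential * self.decay + input_current
        if self.potential >= self.threshold:
            self.spikes.append(t)
            self.potential = 0.0  # reset after firing
            return True
        return False


if __name__ == "__main__":
    neuron = LIFNeuron()
    # A constant, weak input: the neuron needs a few steps to charge up before firing.
    for t in range(20):
        neuron.step(t, input_current=0.3)
    print("Spike times:", neuron.spikes)
```

With these made-up values the neuron fires every fourth step (at steps 3, 7, 11, 15 and 19). The appeal for efficiency is that nothing happens between spikes, so hardware built around this model can sit mostly idle rather than crunching every value on every clock.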

Over at the Telluride Neuromorphic Cognition Engineering Workshop, researchers demonstrated Loihi systems powering various AI implementations. Crucially, Loihi has proven useful in giving the AMPRO prosthetic leg greater adaptive capabilities, allowing the prosthetic to better respond to kinematic disturbances.

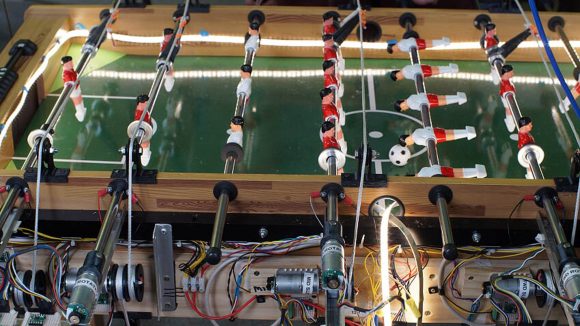

The chip also proved itself a dab hand at foosball in a demonstration of neuromorphic compute capability. Using information from event-based cameras, researchers managed to automate an entire foosball game… for science, of course.

Traditionally, graphics cards have been the go-to tech for deep learning thanks to their power-efficient parallelism. This has made for a burgeoning market that AMD and, perhaps most of all, Nvidia have wholeheartedly embraced in recent years. But Intel still wants a slice of the pie, and it’s been pursuing alternatives adjacent to CPU technology to carve out its own share of the market. Loihi is perhaps the most atypical solution of the lot, but let’s not forget Intel is also developing its own rival GPU tech for this very purpose.

Intel is touting up to 1,000 times faster information processing than a CPU, and 10,000 times greater efficiency, in specialised compute tasks. Those numbers are awfully amorphous, however. While there’s still not much in the way of specifics, we can just about glean some idea of this system’s power efficiency thanks to Chris Eliasmith, a professor at the University of Waterloo.

“With the Loihi chip we’ve been able to demonstrate 109 times lower power consumption running a real-time deep learning benchmark compared to a GPU, and five times lower power consumption compared to specialized IoT inference hardware,” Eliasmith says. “Even better, as we scale the network up by 50 times, Loihi maintains real-time performance results and uses only 30 percent more power, whereas the IoT hardware uses 500 percent more power and is no longer real-time.”
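Those scaling figures are easier to compare if you run the numbers. The sketch below assumes that “five times lower power consumption” means the IoT hardware draws five times Loihi’s power at the original network size, and that “X percent more power” means a multiplier of 1 + X/100 (so 500 percent more is six times the original). Both readings are our interpretation of the quote, not figures Intel or Eliasmith have spelled out.

```python
# Worked arithmetic for the Loihi vs IoT inference hardware comparison.
# Assumptions (our reading of the quote): the IoT hardware starts at 5x Loihi's
# power, and "X percent more power" means multiplying by (1 + X / 100).

def scaled_power(baseline: float, percent_increase: float) -> float:
    """Return power after an increase of `percent_increase` percent."""
    return baseline * (1 + percent_increase / 100)


loihi_before = 1.0               # normalise Loihi's original power draw to 1 unit
iot_before = 5.0 * loihi_before  # IoT hardware: five times Loihi's power

loihi_after = scaled_power(loihi_before, 30)  # network 50x larger: +30% power
iot_after = scaled_power(iot_before, 500)     # network 50x larger: +500% power

gap_before = iot_before / loihi_before
gap_after = iot_after / loihi_after

print(f"Power gap before scaling: {gap_before:.1f}x")
print(f"Power gap after scaling:  {gap_after:.1f}x")
```

On that reading, the power gap between the two widens from 5x to roughly 23x once the network is scaled up. Bear in mind the IoT hardware is also no longer running in real time at that size, so the two aren’t quite doing the same job.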


Intel is pitching Loihi as a bespoke answer to deep learning workloads, one that takes the place of general-purpose chips such as the regular CPU in your gaming rig. The company believes that as process node scaling slows, specialised architectures, or ASICs (application-specific integrated circuits), will become necessary to keep performance progressing at the pace Moore’s Law once delivered.

Intel’s Loihi chip is produced on the 14nm process node. The company has yet to confirm whether it will adopt the 10nm or 7nm process nodes for this processor, both of which are expected to handle the bulk of Intel’s manufacturing over the next half decade, but a move seems unlikely to be a priority for such an architecturally unusual chip.

Later this year, Intel plans to roll out a scaled-up system, Pohoiki Springs, intended to deliver further performance and efficiency gains for neuromorphic workloads.