Nvidia has launched a brand new graphics card: the Tesla T4. The Turing GPU within the T4 is a slightly cut-down version of the chip Nvidia’s RTX 2080 will soon launch with, albeit with a focus on power-saving. As the Tesla name suggests, it’s built not for gaming but for one specific task: AI inference.

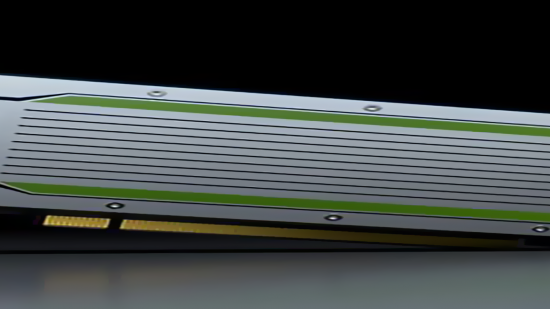

Within the Tesla T4’s PCIe form factor there’s a Turing GPU fitted with 2,560 CUDA cores. The T4 also comes with 320 Tensor Cores, the cores responsible for the brunt of AI computation. If we do a little quick-fire maths, that’s just a little less than the RTX 2080’s 2,944 CUDA cores and estimated 368 Tensor Cores. Due to the stringent 75 watt power envelope for cost-effective data centre use, the T4 will likely also feature stunted clock speeds compared to its GeForce compadre.
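For anyone who wants to check the quick-fire maths, here is a minimal Python sketch. It assumes the RTX 2080 keeps the same CUDA-to-Tensor core ratio as the T4’s quoted figures (2,560 to 320), which is how the 368 estimate falls out. The function and variable names are purely illustrative.

```python
# Core counts quoted in the article.
T4_CUDA_CORES = 2560
T4_TENSOR_CORES = 320
RTX_2080_CUDA_CORES = 2944


def estimate_tensor_cores(cuda_cores, ref_cuda, ref_tensor):
    """Estimate Tensor Cores for a chip, ASSUMING it shares the
    reference chip's CUDA-to-Tensor core ratio (hypothetical helper)."""
    cuda_per_tensor = ref_cuda // ref_tensor
    return cuda_cores // cuda_per_tensor


# CUDA cores per Tensor Core implied by the T4's figures.
cuda_per_tensor = T4_CUDA_CORES // T4_TENSOR_CORES

# Estimated Tensor Core count for the RTX 2080 under that assumption.
rtx_2080_tensor_estimate = estimate_tensor_cores(
    RTX_2080_CUDA_CORES, T4_CUDA_CORES, T4_TENSOR_CORES
)

# Share of the RTX 2080's CUDA cores that the T4 retains.
t4_fraction = T4_CUDA_CORES / RTX_2080_CUDA_CORES

print(f"CUDA cores per Tensor Core: {cuda_per_tensor}")
print(f"Estimated RTX 2080 Tensor Cores: {rtx_2080_tensor_estimate}")
print(f"T4 CUDA cores relative to RTX 2080: {t4_fraction:.0%}")
```

On those numbers the T4 keeps roughly 87% of the RTX 2080’s CUDA cores, which squares with the “slightly cut-down” description.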

There is one front, however, where the T4 bests its RTX counterparts, and that’s memory. The T4 comes with 16GB of GDDR6, offering up 320GB/s of bandwidth. That’s double the capacity of the RTX 2080’s 8GB, and still a hefty improvement on the RTX 2080 Ti’s 11GB of GDDR6.

Memory doesn’t come cheap, and neither does Nvidia’s platform support. We don’t have a confirmed price yet. But while it may be a little less hearty in terms of spec than the RTX 2080, set to launch at $699, the Tesla T4 is sure to cost considerably more.

Why pay the premium, you ask? Well, it’s not all down to hardware and specs. Unfortunately for all those AI inference-dependent businesses out there, they also need access to Nvidia’s extensive libraries of drivers, engines, containers, and other useful tools and frameworks necessary to get the most out of these professional GPUs – which cost a pretty penny. Specifically, that’s the Nvidia TensorRT Hyperscale Platform for inference workloads.

“Our customers are racing toward a future where every product and service will be touched and improved by AI,” Ian Buck, VP and GM of accelerated business at NVIDIA, says. “The NVIDIA TensorRT Hyperscale Platform has been built to bring this to reality — faster and more efficiently than had been previously thought possible.”

But Nvidia believes this card will be well-placed, and worth it, for a market that the green team suspects will, over the course of the next five years, reach $20 billion in value. That’s probably why AMD is equally obsessed with breaking into this lucrative market with its own Radeon machine learning cards, soon to be joined by the first 7nm GPU, the upcoming Vega 20.

And the market seems eager to lap up the Tesla T4 already. Nvidia’s press release lists company after company – Microsoft, Google, Cisco, Dell EMC, Fujitsu, HP Enterprise, IBM, Supermicro, Kubeflow, and Oracle – all singing the Tesla T4’s praises before the cards have even made it into their systems.