Nvidia and Intel’s “my processor’s better than your processor” quarrel continues… The green team has hit back at Intel’s attempts to persuade AI boffins that its Xeon Scalable server CPUs, and not Nvidia’s specialised GPUs, are the superior artificial intelligence number-crunchers after all.

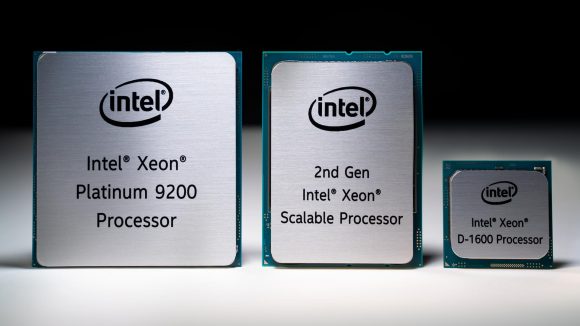

Back on May 13, Intel published an article on its AI developer program site called “Intel CPU outperforms Nvidia GPU on ResNet-50 deep learning inference,” in which it claimed that its Xeon Platinum 9282 chip was capable of 7,878 images per second on ResNet-50. Nvidia’s Tesla V100, the article continues, could only manage 7,844 – that’s going by Nvidia’s own numbers published on its own site.

This would be the first blow in what has become a tit-for-tat spat over whose AI processor is better. Nvidia has just responded with its own article, “Intel highlighted why Nvidia Tensor Core GPUs are great for inference”, in which the green team says that “to achieve the performance of a single mainstream Nvidia V100 GPU, Intel combined two power-hungry, highest-end CPUs with an estimated price of $50,000 – $100,000.”

It all comes down to power and performance per processor, according to Nvidia’s article (via The Register). While a two-socket Intel Xeon Platinum 9282 system may just about scrape past Nvidia’s V100, the GPU requires less power and silicon to do so.

Nvidia’s ‘performance per processor’ metric effectively halves Intel’s inference score, while its V100 GPU, at 300W, is also 2.3x more energy efficient than Intel’s dual-socket setup, the article says. Nvidia’s T4 processor, built upon the same Turing architecture as the RTX 20-series and GTX 16-series (but with all the high-end gubbins), manages power efficiency 7.2x that of Intel at just 70W.
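For anyone who wants to check the sums, here is a rough sketch of the ‘performance per processor’ arithmetic in Python. It only uses figures quoted in this article; the function names are made up for illustration, and the 300W figure is simply the one attached to the V100 above, not a measured power draw.

```python
# Figures quoted in the article (ResNet-50 inference, images per second).
XEON_9282_DUAL_SOCKET_IPS = 7878  # Intel's claim for a two-socket Xeon Platinum 9282 system
TESLA_V100_IPS = 7844             # Nvidia's published figure for a single Tesla V100
V100_POWER_WATTS = 300            # power figure the article attaches to the V100 (assumed here)


def per_processor(images_per_second: float, processors: int) -> float:
    """Split a system's throughput evenly across its processors."""
    return images_per_second / processors


def per_watt(images_per_second: float, watts: float) -> float:
    """Throughput per watt for a given power figure."""
    return images_per_second / watts


xeon_each = per_processor(XEON_9282_DUAL_SOCKET_IPS, 2)
v100_each = per_processor(TESLA_V100_IPS, 1)

print(f"Xeon Platinum 9282, per processor: {xeon_each:.0f} images/sec")
print(f"Tesla V100, per processor: {v100_each:.0f} images/sec")
print(f"V100 advantage per processor: {v100_each / xeon_each:.2f}x")
print(f"V100 at {V100_POWER_WATTS}W: {per_watt(TESLA_V100_IPS, V100_POWER_WATTS):.1f} images/sec per watt")
```

Split evenly, Intel’s 7,878 images per second works out to 3,939 per Xeon, which leaves the single V100 roughly twice as fast per processor. The article doesn’t give a wattage for Intel’s server, so the sketch stops short of reproducing the 2.3x efficiency claim.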

And then Nvidia went a little further… and this is where things get messy.

Nvidia claims that its mini T4 PCIe AI processor is more than 56 times faster than a dual-socket CPU server on the BERT language model, and 240 times more power efficient. But if you look at the table just below in Nvidia’s post, that’s not versus the same Intel dual-socket server from before. No, it’s a dual-socket Intel Xeon Gold 6240 rig. Any half-competitive gamer knows platinum is far better than a puny gold rank.

Then Nvidia strikes again, claiming that its T4 can also give Intel a good kicking on neural collaborative filtering (NCF) models. That little inference machine manages a whopping 10 times performance advantage over Intel this time, and 20 times the energy efficiency. Oh, I forgot to mention that’s versus a single Intel Xeon Gold 6240. Hardly the tit-for-tat face-off we were expecting.

“It’s not every day that one of the world’s leading tech companies highlights the benefits of your products,” Nvidia’s blog post reads. “Intel did just that last week, comparing the inference performance of two of their most expensive CPUs to NVIDIA GPUs.”

But maybe it’s not that cut-and-dried, after all. Nvidia’s purpose-built AI inference GPU sure is plenty capable for far less power, but Intel’s sure to retort that its general-purpose server chip is also hella good for much more than simply inference – and not too bad at that, either.

It’s rather fun to see these two go at it, irrespective of who’s actually the bee’s knees for AI. They’re like two punch-drunk boxers slugging it out for our entertainment – all the while training the machines that will one day doom us all.