[Update] AMD have responded to this article with their own views on the community-created benchmark data, stating in no uncertain terms, “Maxwell is not capable of asynchronously executing graphics and compute.” NVIDIA has yet to comment.

[Original Story]

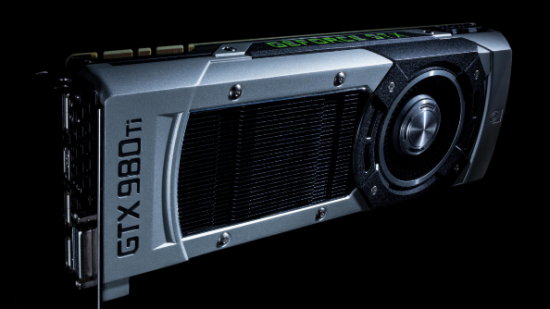

There’s been a bit of a furore lately regarding some comments made by Ashes of the Singularity developers Oxide Games. They claim NVIDIA “put pressure” on them to disable certain settings in their DirectX 12 benchmark, the implication being that those settings would cast Team Green’s GPUs in an inferior light. Oxide also claim that NVIDIA’s Maxwell family of graphics cards can’t handle asynchronous shading, whereas AMD’s latest cards can. But how seriously should you take those claims? Is it time to bin your GTX 980, because a small studio making its first game in a new API says it might not be as good as an AMD card?

It all began when an unnamed developer at Oxide, under the handle Kollock, posted some views regarding NVIDIA hardware performance with Ashes of the Singularity’s DirectX 12 benchmark (via overclock.net). Specifically, Kollock stated that NVIDIA’s Maxwell GPUs aren’t capable of asynchronous compute/shading tasks, and that NVIDIA pressured the studio to disable that feature on their cards:

“Personally, I think one could just as easily make the claim that we were biased toward Nvidia as the only ‘vendor’ specific code is for Nvidia where we had to shutdown async compute. By vendor specific, I mean a case where we look at the Vendor ID and make changes to our rendering path. Curiously, their driver reported this feature was functional but attempting to use it was an unmitigated disaster in terms of performance and conformance so we shut it down on their hardware. As far as I know, Maxwell doesn’t really have Async Compute so I don’t know why their driver was trying to expose that. The only other thing that is different between them is that Nvidia does fall into Tier 2 class binding hardware instead of Tier 3 like AMD which requires a little bit more CPU overhead in D3D12, but I don’t think it ended up being very significant. This isn’t a vendor specific path, as it’s responding to capabilities the driver reports.”

Let’s pump the brakes on this for a second. What exactly is asynchronous compute/shading? In the simplest terms possible, it means your graphics card is able to queue processing tasks by type (a graphics queue for rendering tasks, a compute queue for physics, lighting and post-processing tasks, a copy queue for simple data transfers) and then work through them simultaneously, minimising the amount of time spent idle. It’s about increasing efficiency, and as the last few architecture generations from NVIDIA and AMD have taught us, efficiency is everything. (Here’s an Anandtech deep dive into async shading if you want further detail.)

So what Oxide are claiming is that NVIDIA’s Maxwell GPUs are currently way less efficient than their AMD counterparts in DX12 applications. It’s a big deal for both manufacturers, because it suggests AMD has a tangible performance advantage over NVIDIA as PC gaming makes the jump to DX12.

However, the tech specs of NVIDIA’s Maxwell and AMD’s GCN cards both state support for async compute/shading. Maxwell’s architecture comprises one graphics engine and one shader engine, capable of a command queue 31 tasks deep. GCN’s architecture works differently, featuring one graphics engine and eight shader engines, each with a command queue 8 tasks deep. That’s 8 × 8 = 64 simultaneous tasks in total.

That’s what the manufacturers claim, but as we’ve learned over the years it’s prudent not to take those claims as gospel. That’s why the world gives us people like MDolenc, a Beyond3D user who took it upon themselves to whip up a bespoke DX12 benchmark to test exactly how Maxwell and GCN graphics cards perform when bombarded with anywhere from 1 to 128 sequences of code to digest. The idea is that if NVIDIA’s cards really aren’t capable of async compute/shading in DX12, each additional sequence would add to the total processing time, so the time taken would climb steadily from 1 sequence all the way up to 128. However, if it takes the card the same amount of time to process 31 sequences as just one, that’s an indication that it’s using async compute/shading.
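To make that reasoning concrete, here is a minimal toy model in Python. It is not MDolenc’s benchmark and it doesn’t touch a GPU; it simply assumes a card can work through up to `queue_depth` sequences at once, and that every batch takes the same hypothetical `unit_ms`. The function names, the 1 ms unit and the “serial” depth of 1 are all illustrative assumptions, while the depths of 31 and 64 are the manufacturers’ figures quoted above.

```python
import math


def batches_needed(num_sequences: int, queue_depth: int) -> int:
    """Back-to-back batches required if up to queue_depth sequences run at once."""
    return math.ceil(num_sequences / queue_depth)


def modelled_time(num_sequences: int, queue_depth: int, unit_ms: float = 1.0) -> float:
    """Toy processing time: every batch costs the same hypothetical unit_ms."""
    return batches_needed(num_sequences, queue_depth) * unit_ms


# Illustrative depths: 1 = no async at all (assumption), 31 and 64 = claimed figures.
SERIAL_DEPTH = 1
CLAIMED_DEPTH_31 = 31
CLAIMED_DEPTH_64 = 64

if __name__ == "__main__":
    print("sequences  serial  depth-31  depth-64")
    for n in (1, 31, 32, 64, 65, 128):
        print(
            n,
            modelled_time(n, SERIAL_DEPTH),
            modelled_time(n, CLAIMED_DEPTH_31),
            modelled_time(n, CLAIMED_DEPTH_64),
        )
```

In this model a card with no async support gets slower with every extra sequence, while a card with a 31-deep queue takes the same time for 31 sequences as for one and only steps up at the 32nd. Real hardware won’t be this tidy, but it’s the shape of curve the benchmark is looking for.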

You can read through the results here, and in pretty graph form here. In short: they demonstrate that both architectures are using async shading. NVIDIA cards showed a sharp increase in processing time beyond 31 sequences, in line with the manufacturer’s claims about their 31-deep command queue. AMD’s results showed very little discrepancy between 1 sequence and 128, but there was a minor increase after 64, again in line with the card’s stated command queue.

This isn’t conclusive evidence; it is, after all, a benchmark made by an unaffiliated third party. The results do provide a counterargument to Oxide’s claims though. Moreover, they’re a reminder that Ashes of the Singularity, the first DX12 game produced by a small studio using very early DX12 drivers from both manufacturers, probably isn’t conclusive evidence either.

We’ve reached out to both NVIDIA and Oxide for comment, and will update this story as and when they reply.