The shift from standard definition and the halfway house of 720p to 1080p full HD was a big deal. It was a unified effort on the part of TV, console and PC hardware manufacturers to change the way we watched and played our media, and it’s inarguably changed the face of gaming. Several years into a similar effort from manufacturers to encourage the jump from 1080p to ultra-HD, though, what’s the state of 4K+ gaming in 2015 and beyond?

When Bioshock released in 2007, it brought with it a level of fidelity that allowed Irrational Games’ level designers to tell a story with the environments, rather than simply through dialogue. Rapture’s clearly legible posters, flyers, labels, and the like were a breakthrough moment for a new generation of consoles, and set a bar on PC that no first-person game dared dip below if it wanted to be taken seriously. In short, 1080p changed gaming.

If 4K is capable of a similar transformative moment, we’ve yet to see it. Indeed, many of us are yet to see 4K at all in our homes. Steam’s November hardware survey reports a 0.07% share of its user base gaming at 3840 x 2160, compared with 35.21% gaming at 1920 x 1080. 4K isn’t becoming the new standard for gamers as 1080p has been for several years. It remains, for now at least, a hobbyist proposition.

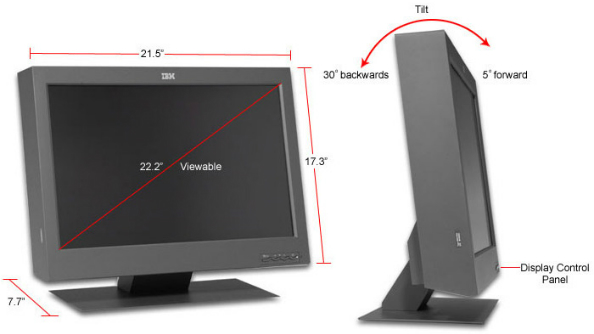

It isn’t a bleeding edge tech standard anymore, either. IBM produced the first 4K production monitor back in 2001; the T220 had a native resolution of 3840 x 2400 on a 22.2-inch screen and became the godfather of ultra-HD displays. It wasn’t until 2012 though that manufacturers began to push 4K to the consumer market, and at that point they ran into several problems.

The first was the message. High definition was a simple concept, but by comparison 4K seems iterative, less revolutionary than its predecessor. “An experience only 4K can deliver,” ran the tagline for the 31-inch Asus PQ321Q, the first commercially available 4K PC monitor back in 2013. Eyes were more likely to be drawn to the £3000 price tag than the 3840 x 2160 resolution though.

Early adopters who weren’t put off by 4K’s mile-high entry fee in 2013 had other problems to deal with. The PQ321Q’s 60Hz refresh rate made it a unicorn in a market otherwise populated by lacklustre 30Hz displays – and even so, it achieved that 60Hz by running two separate 1920 x 2160 displays side-by-side and ‘tiling’ them, which brought about its own problems when attempting to run at lower resolutions. Windows also had a hard time scaling to that ultra-high resolution, which often left users squinting at minuscule icons or enduring out-of-whack aspect ratios.

There’s also the name itself: 4K. 720p is a transparent term, likewise 1080p: each counts the vertical lines on screen. But 4K tries to get across something more complex. It isn’t a vertical resolution of 4000p; it nods loosely to a horizontal resolution of nearly 4,000 pixels, and what it actually delivers is four times the pixels (8.3 million to 1080p’s 2.1 million). It conveys an image of marketing people rather manically gushing that it’s four times better, without being totally clear what makes that true. There’s heated debate on how to even measure a pixel.
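For anyone who wants to check the sums behind that comparison, here is a minimal Python sketch. The function and variable names are purely illustrative; the only inputs are the two resolutions quoted in this article.

```python
def pixel_count(width, height):
    """Total number of pixels in a single frame."""
    return width * height

full_hd = pixel_count(1920, 1080)   # 1080p
ultra_hd = pixel_count(3840, 2160)  # consumer "4K"

# How many 1080p frames' worth of pixels fit into one 4K frame.
ratio = ultra_hd / full_hd

print(f"1080p: {full_hd:,} pixels")
print(f"4K:    {ultra_hd:,} pixels")
print(f"4K has {ratio:.0f}x the pixels of 1080p")
```

Doubling both the width and the height is what produces the fourfold jump, which is why the name says more about the horizontal count than about the total.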

We’re two years into 4K’s lifespan as a viable gaming standard. There have been great improvements in that time: the price of entry has dropped dramatically to around £350 for a basic panel, Windows doesn’t get quite as befuddled, and graphics cards are better at producing the hundreds of millions of pixels per second required for smooth gameplay. But there’s a new problem: already, some are looking beyond 4K to higher resolutions still.
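To put a rough number on that workload, the sketch below multiplies the pixels in one frame by the refresh rate. It assumes one fully rendered frame per refresh and ignores anti-aliasing, overdraw and anything else a real renderer does, so treat it as a back-of-the-envelope estimate with made-up helper names rather than a benchmark.

```python
def pixels_per_second(width, height, refresh_hz):
    """Pixels drawn each second, assuming one full frame per refresh."""
    return width * height * refresh_hz

# Resolutions and refresh rates mentioned in this article.
uhd_60 = pixels_per_second(3840, 2160, 60)
uhd_30 = pixels_per_second(3840, 2160, 30)
fhd_60 = pixels_per_second(1920, 1080, 60)

print(f"4K at 60Hz:    {uhd_60:,} pixels per second")
print(f"4K at 30Hz:    {uhd_30:,} pixels per second")
print(f"1080p at 60Hz: {fhd_60:,} pixels per second")
```

On those assumptions, a 60Hz 4K panel asks for just under half a billion pixels every second, four times what 1080p needs at the same refresh rate.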

Slightly Mad Studios started the trend in April 2015 when they announced that Project Cars would officially support 12K – three 4K panels set up horizontally, displaying simultaneously. Frontier Developments also rolled out support for 16K screenshotting in 2015, with native 16K support to follow when hardware catches up and can actually run the game under those conditions.

Furthermore, both AMD and NVIDIA have been publicly discussing their efforts to progress to 8K x 16K resolution since 2013. That’s the “perfect” pixel density and scale for the human eye, they claim. It might even represent something of a conclusion to the resolution arms race – there’s no great desire on anyone’s part to go beyond 8K x 16K, because we’re simply not physically equipped to appreciate the fidelity increase beyond that point.

AMD’s chief gaming scientist Richard Huddy told PCR Online back in August: “When you get to 16K, improving on that buys you nothing – that’s kind of a done deal then. But if you think about how much extra horsepower that is, that’s a lot from where we are at the moment.”

Huddy is convinced 8K gaming will become the new standard at some point, but not until the technologies that facilitate it catch up. “It will do, I’ve got no doubt about that, but we’re a little while from it. We’re only just at the stage where we can run a 4K display at a reasonable refresh rate.”

The message that hardware consumers receive when manufacturers start talking about 8K and 16K adoption is that 4K is an incremental progression from 1080p that will soon be phased out. That being the case, why bother to join the party at this stage? Why not save your money and wait for an 8K panel, with the graphics hardware required to run games at that resolution?

And in turn, the message manufacturers get back from consumers who aren’t adopting 4K is: why bother to develop a higher fidelity display standard if people aren’t buying into the current one? Just about every agency forecast on 4K adoption predicts that it’ll catch on quicker than HD – but that isn’t being reflected in the gaming sphere so far.

Let’s not forget the game developer’s role in this, either. It’s one thing to ‘support’ a particular resolution, and quite another to optimise your game engine so that it performs at 4K and is scalable down to 1080p and below, providing good quality experiences for all. It’s an especially pertinent point in the current climate when many developers are developing across console platforms and PC, where the vast majority of users will be playing at 1080p.

Netflix’s prediction early in the year that both Sony and Microsoft would be introducing 4K-compatible consoles in 2015 fell wide of the mark, but industry murmurs perpetuate the idea that it’ll happen in the near future. At that point, developers may gain more incentive to optimise for 4K. Until then, it’s simply not a priority.

Adam Simonar is studio director at NVYVE Studio, who are currently working on their debut game, P.A.M.E.L.A. “4K support is theoretically something that’s – well, I don’t want to say easy – but it’s something that we can support depending on the user’s hardware,” he tells me.

“For us it ends up being an optimisation challenge, to get the game running reasonably well so that someone with a high end computer could run it at 4K.

“We actually do test periodically, we have a 4K monitor in the studio. Jumping from 1080p to 4K, the main difference maker is the graphics card, so someone’s going to need a high end card. With the game itself we’re aiming for a pretty high visual benchmark so it’s not easy to run [at 4K], but people should be able to if they’ve got a reasonably powerful computer.”

The goalposts for 4K are ever-changing. It relies on a user base that’s willing to buy both a high-end monitor and a high-end graphics card to support it, and it’s already been positioned as a rung on the ladder that ends with 8K x 16K. In the world of TV, it’s doing well – it’s an easier message to sell, and requires a simpler one-time investment. But in the world of PC gaming it’s a niche, vying for space with VR and multi-monitor 1080p setups.

Success is hard to measure, then. We’re in a transitional phase, in which we can’t decide whether we aspire to interact with our games wearing a headset, walking in front of cameras, sitting before a single screen, or many. The deciding factor will be the next game to offer an experience like Bioshock: a game that finds meaning in the new display standard, and explores new opportunities for gameplay within it. Until that game, why go 4K? There isn’t a clear answer.