So, seriously what the f*** is AMD’s new Arcturus GPU for exactly? The starry Arcturus codename has been bandied about for a while now, initially heralded as the next generation of graphics architecture from AMD, but more recently as something more akin to a graphics platform or SoC. Why are we bringing this up again now? Because AMD’s busy Linux bods added support for the Arcturus GL-XL device to the latest Linux patches from Monday July 15.

In fact it seems to have added three distinct PCI device IDs for the Arcturus ‘range’, each with a completely different name. That’s not the weird part, however… that’s left to the entries about VCRAT, or virtual CRAT, and the GPU cache info, which directly relates to the number of compute units (CUs) in place. And there it says: “For Arcturus, CU number has been increased from 64 to 128. So the required memory for vcrat also increases.”

That means we’re potentially talking about a set of 7nm Vega-based GPUs with up to 128 compute units inside them. Or, if you want some real mind-blowing numbers… 8,192 GCN cores. I mean… I know they’re Vega cores, but dayyyymmm.
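For anyone who wants to check our maths, here is a minimal sketch of where that 8,192 figure comes from. It assumes the usual GCN layout of four SIMD units per compute unit with 16 lanes each (so 64 stream processors per CU), and that Arcturus keeps that layout, which the patches don't actually confirm. The function and constant names are ours, purely for illustration.

```python
# Assumption: standard GCN compute unit layout (4 SIMDs x 16 lanes = 64 "cores").
# Nothing in the Linux patches confirms Arcturus keeps this exact layout.
SIMDS_PER_CU = 4
LANES_PER_SIMD = 16


def gcn_cores(compute_units: int) -> int:
    """Return the stream processor count for a given number of GCN CUs."""
    return compute_units * SIMDS_PER_CU * LANES_PER_SIMD


if __name__ == "__main__":
    for cus in (64, 128):
        print(f"{cus} CUs -> {gcn_cores(cus):,} GCN cores")
```

On those assumptions, the old 64 CU ceiling works out at 4,096 cores and the new 128 CU figure doubles it.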

The new Linux patches first came to light via the usual Phoronix-y type places, and have been thrown around Twitter by the usual Komachi-y people, but what exactly these three new Vega 20-based GPUs might be is still up for debate. Sadly, one thing we are pretty confident about is that this isn’t going to be some monster 7nm graphics chip, because Michael Larabel is reporting that “Arcturus has not 3D engine.”

That means Arcturus is a pure compute device, using all that Vega GCN power for straight number-crunching and not pixel-pushing. AMD did announce that, despite Navi being its latest GPU architecture, it was a gaming-focused design, and that Vega and Graphics Core Next were still going to be used in the compute space.

So, again I say, what the f*** is AMD’s new Arcturus GPU? Jacob and I have put our thinking caps on (they’re water-cooled and filled with thermal grease to keep our fevered brains cool) and we’ve come up with some ideas.

AMD Arcturus is Radeon’s ray tracing solution for PS5

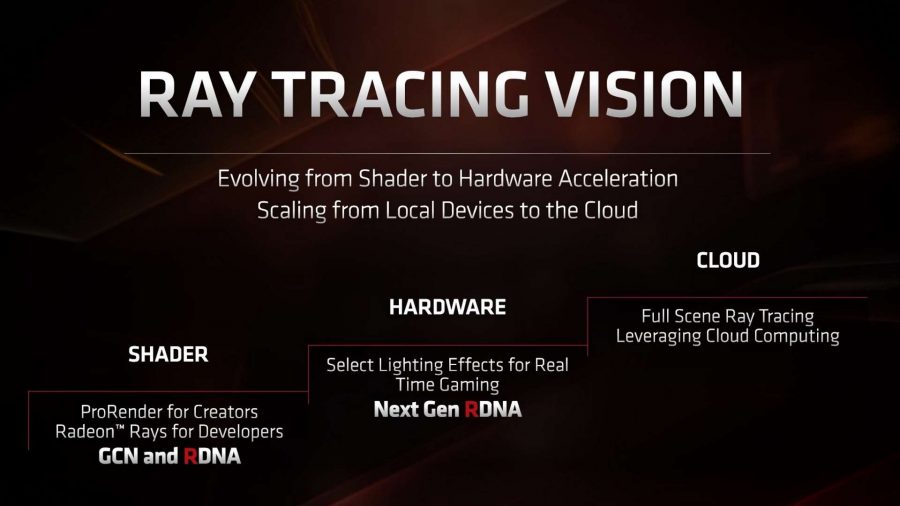

Nvidia has dedicated hardware for real-time ray tracing calculations. AMD does not. So Jacob’s thinking is that this is some sort of dedicated ASIC for pure ray tracing calculations. A core-happy slice of 7nm Vega silicon that can sit alongside a standard 3D-capable graphics chip and crunch the numbers so AMD can deliver DXR-based lighting effects for PC and consoles, maybe?

That’s not impossible. Ray tracing is a compute problem, and the Graphics Core Next tech is much more compute-centric than the newer RDNA design. But an 8,192-core GPU alongside the standard PS5 and Xbox Scarlett Navi/Zen 2 APU? That’s hard to believe. And a ray tracing add-in card for PCs? Even more unlikely.

AMD Arcturus is that future ray tracing in the cloud thing

Around the launch of Navi, AMD was at pains to explain that, while the RX 5700 XT et al wouldn’t have any form of hardware-based ray tracing acceleration as part of their makeup, the second-gen RDNA chips will do. But its ray tracing vision has “full scene ray tracing leveraging cloud computing” as the eventual goal.

Could Arcturus be that goal made flesh… er, silicon? No. It’s a bit early for that. But the compute-centric, and potentially multi-GPU nature of what Arcturus seems to be could play well with the potential of having the cloud work at rendering the extra lighting effects for games. But we’re a long way from having the infrastructure or super low latency for that sort of computing to be done on the fly in the cloud and seamlessly filtered into your game.

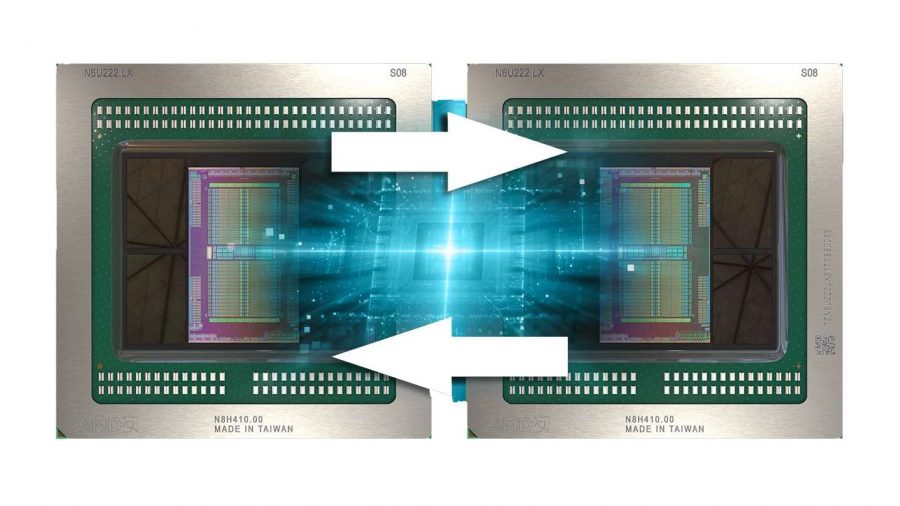

AMD Arcturus is the Radeon Pro Duo for Apple

Them fruity Apple types have got some new dual-GPU cards, right? It’s probably that. Apple likes to have proprietary shiz it can jealously hoard so maybe Arcturus is the name AMD has proffered for its version of the Radeon Pro Vega II Duo. That’s got up to 128 CUs across a pair of Infinity Fabric-linked GPUs and is all about the compute.

But Arcturus seems to reference a single GPU with 128 CUs inside it. And the Radeon Pro Vega II Duo needs to have a 3D engine in it, because even though Apple hates gaming – those polo neck jumpers make it impossible – it still needs to be able to display its software somewhere. That, and the PCI device IDs for Arcturus are very different from those of standard Vega 20 GPUs.

AMD Arcturus is the brains behind Google Stadia

We know that Google’s Stadia streaming service is using AMD GPUs in its backend, and we know that currently those GPUs are Vega-based. The existing silicon is supposedly heavily based on the RX Vega 56, but maybe the Arcturus GPUs represent a potential upgrade of Stadia’s GPU resources just ahead of launch.

There are also potential references for Arcturus and XGMI (inter-chip global memory interconnect) within the new Linux code. XGMI is AMD’s kind-of-NVLink analogue and could be used to connect multiple Arcturus GPUs together to form the sort of instanced graphics pools Google has been touting as the way Stadia will cope with the demands of super-smooth 4K gaming on its system and for future games too.

Again we go back to the lack of a 3D engine on these chips, however, so while the graphics compute stuff could be done on Arcturus it would need to go through another actual GPU to spit out some visuals which would only add in unwelcome latency.

AMD Arcturus is the compute silicon for the Frontier supercomputer

“Frontier will feature custom CPU and GPU technology from AMD and represents the latest achievement on a long list of technology innovations,” said AMD’s Forrest Norrod at the announcement of its goal of creating the world’s most powerful supercomputer in 2021.

Alongside Cray and the Oak Ridge National Laboratory, AMD is creating the custom CPUs and GPUs that will go into the Frontier supercomputer, and maybe the extremely high core count Arcturus GPUs, with their compute focus and lack of 3D engine, would make for the perfect fit with a big ol’ 7,300 sq ft machine. Certainly sticking 8,192 GCN cores on a single 7nm die would save space, as opposed to having a pair of GPUs, so it does make sense.

As does its potential support for XGMI. Frontier is going to need an awful lot of these GPUs inside it to be able to offer “the performance of the top 160 fastest supercomputers in the world combined” after all.

—

Well, those are our considerations for what AMD’s Arcturus could actually be, but if you’ve got any other thoughts or speculative technical expertise you wish to impart, feel free to get in touch. As you can probably tell, we don’t really have a clue…
