Nvidia is all set to launch a whole new generation of graphics cards, but while there is a lot of excitement about all the new graphical goodness they look set to offer, there are as many reasons to be worried as there are reasons to be cheerful.

We don’t want to get completely doom and gloom about the new hardware; it’s more than two years since Nvidia released a whole new gaming GeForce GPU architecture, and we’re genuinely excited about what the new cards are going to offer. The RTX 2080 et al are set to deliver a new world of real-time ray tracing, better general gaming performance, and the possibility of artificial intelligence making our games look awesome. Living in the future’s great, right?

But these new GPUs haven’t appeared in isolation, and there are reasons to be a little sceptical about just what a brave new dawn the RTX 20-series GPUs will bring, whether it’s their offensively high prices or the possibility that all these shiny new features will be essentially ignored by developers focusing on console development first and foremost.

The new cards represent an Nvidia GPU launch in a world where it has precisely zero competition at the high end of PC gaming graphics, and that has fed directly into this new generation. But this line-in-the-sand moment had to come at some point, and this is probably the best time for Nvidia to change stance, jam its ray tracing flag into the dirt, and declare the dawn of real-time ray tracing in games.

Whether many of us will actually get the chance to ray trace anything before the next generation of RTX GPUs arrives is another question…

Pro

Real-time ray tracing

First off you’ve simply got to be excited about the fact we’re getting real-time ray tracing in gaming hardware released this year and in actual games… this year.

It’s been the holy grail of PC graphics for as long as I can remember, that process of actually simulating the physical properties of light, rather than faking them through rasterization.

Thanks to the new RT Cores, the 20-series cards can accelerate bounding volume hierarchy (BVH) traversal, the current industry-standard acceleration structure for ray tracing. These accelerators mean the top-end RTX 2080 Ti is capable of tracing up to 10 billion rays of light every second.

The GTX 1080 Ti, by comparison, could only trace around 1 billion. Pathetic.
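If you’re wondering what accelerating a bounding volume hierarchy actually involves, here’s a minimal software sketch in Python, assuming simple spheres as scene geometry and a naive median split to build the tree. It is not how RT Cores work internally (Nvidia hasn’t published that), and every name in it, such as `Sphere`, `build` and `trace`, is hypothetical. The point is the pruning: a ray only tests the objects inside boxes it actually passes through, rather than every object in the scene.

```python
import math
from dataclasses import dataclass, field
from typing import List, Optional, Tuple

Vec = Tuple[float, float, float]

@dataclass
class Sphere:
    """Hypothetical stand-in for scene geometry (real GPUs trace triangles)."""
    center: Vec
    radius: float

    def bounds(self) -> Tuple[Vec, Vec]:
        c, r = self.center, self.radius
        return (c[0] - r, c[1] - r, c[2] - r), (c[0] + r, c[1] + r, c[2] + r)

@dataclass
class Node:
    """A BVH node: an axis-aligned box plus either two children or a few primitives."""
    lo: Vec
    hi: Vec
    left: Optional["Node"] = None
    right: Optional["Node"] = None
    prims: List[Sphere] = field(default_factory=list)

def build(prims: List[Sphere], leaf_size: int = 2) -> Node:
    """Build a BVH by splitting at the median along the box's longest axis."""
    boxes = [p.bounds() for p in prims]
    lo = tuple(min(b[0][i] for b in boxes) for i in range(3))
    hi = tuple(max(b[1][i] for b in boxes) for i in range(3))
    if len(prims) <= leaf_size:
        return Node(lo, hi, prims=list(prims))
    axis = max(range(3), key=lambda i: hi[i] - lo[i])
    ordered = sorted(prims, key=lambda p: p.center[axis])
    mid = len(ordered) // 2
    return Node(lo, hi, build(ordered[:mid], leaf_size), build(ordered[mid:], leaf_size))

def hit_box(origin: Vec, direction: Vec, lo: Vec, hi: Vec) -> bool:
    """Slab test: does the ray (t >= 0) pass through the axis-aligned box?"""
    tmin, tmax = 0.0, math.inf
    for o, d, a, b in zip(origin, direction, lo, hi):
        if d == 0.0:
            if o < a or o > b:  # parallel to this slab and outside it
                return False
            continue
        t1, t2 = (a - o) / d, (b - o) / d
        tmin, tmax = max(tmin, min(t1, t2)), min(tmax, max(t1, t2))
        if tmin > tmax:
            return False
    return True

def hit_sphere(origin: Vec, direction: Vec, s: Sphere) -> Optional[float]:
    """Nearest positive ray parameter t where the ray meets the sphere, or None."""
    oc = [o - c for o, c in zip(origin, s.center)]
    a = sum(d * d for d in direction)
    b = 2.0 * sum(x * d for x, d in zip(oc, direction))
    c = sum(x * x for x in oc) - s.radius ** 2
    disc = b * b - 4 * a * c
    if disc < 0:
        return None
    root = math.sqrt(disc)
    for t in ((-b - root) / (2 * a), (-b + root) / (2 * a)):
        if t > 1e-9:
            return t
    return None

def trace(node: Node, origin: Vec, direction: Vec, stats: dict) -> Optional[float]:
    """Walk the BVH, skipping every subtree whose box the ray misses."""
    stats["box_tests"] += 1
    if not hit_box(origin, direction, node.lo, node.hi):
        return None
    if node.left is None:  # leaf: test the few primitives stored here
        hits = []
        for p in node.prims:
            stats["sphere_tests"] += 1
            t = hit_sphere(origin, direction, p)
            if t is not None:
                hits.append(t)
        return min(hits, default=None)
    hits = [t for t in (trace(node.left, origin, direction, stats),
                        trace(node.right, origin, direction, stats)) if t is not None]
    return min(hits, default=None)

# Eight spheres in a row; one ray fired straight down the z axis at the first.
scene = [Sphere((3.0 * i, 0.0, 10.0), 1.0) for i in range(8)]
stats = {"box_tests": 0, "sphere_tests": 0}
print(trace(build(scene), (0.0, 0.0, 0.0), (0.0, 0.0, 1.0), stats), stats)
```

Against eight spheres, that ray needs five box tests and just two sphere tests instead of eight. In a real scene with millions of triangles, that kind of pruning is roughly what dedicated ray tracing hardware is there to speed up.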

Con

Super-high prices

Okay, so they may be able to manage this wonderful feat of ray tracing – in a few games, and only in select features like shadows and reflections – but, good lord, are they expensive lumps of silicon.

Nvidia’s RTX 2080 Ti has an MSRP of $999, but because it’s doing its Founder’s Edition shenanigans again the Nvidia version is actually on sale for $1,200. And the board partners are likely to only use that as a starting point for their cards. We’ve even heard rumours that Asus is planning a $1,500 version.

Then you have the RTX 2080 Founder’s at $799 and the third-tier RTX 2070 at $599. Yes, a third-tier GeForce graphics card that costs $600. Even at the lower reference card price of $500 it’s frankly ridiculous. And it’s all because Nvidia has no high-end GPU competition so it can price with impunity.

That’s another thing we can blame on Vega… don’t come at me, Radeon fanbois; I am, of course, kidding.

Pro

Factory-overclocked Founder’s Edition

Yes, the new cards are ridiculously priced, and yes, Nvidia is pulling the whole Founder’s Edition schtick again, but at least this time there’s a reason behind the higher price of the Nvidia-exclusive cards.

Previous Founder’s cards were just the reference GPUs with plain Nvidia-designed blower fans attached, in other words reference cards with a higher sticker price, and they weren’t particularly well received because of it. But the RTX Founder’s Edition cards use factory-overclocked GPUs with higher-spec twin axial fans to improve cooling and keep the noise down.

This is the first time any GPU manufacturer has actually released its own overclocked card as the default version; the reference versions are now only going to come from the board partners. It does mean, though, that the first reviews will be based on these higher-spec Founder’s Edition cards rather than the traditional, base-level reference designs.

Con

Creaky performance with ray tracing

The thing is, when you’re paying $1,200 for a graphics card you’re well within your rights to expect to play the latest games at 4K resolutions, at the highest settings, at 60fps at least.

But that’s not going to be the case when you throw real-time ray tracing into the mix. The performance demands of ray tracing mean that your hyper-expensive graphics card may only be capable of running an RTX-enabled game at those speeds at 1080p.

At the recent RTX announcement event I had a chance to play both Shadow of the Tomb Raider and Battlefield 5 with real-time ray tracing enabled. DICE has specifically aimed to get the RTX version of its game running at 1080p and 60fps, and it came mighty close during our time with it.

But the latest Tomb Raider wasn’t displaying a whole lot of ray-traced shadows and was still only running at between 33fps and 48fps, and that was on the Founder’s Edition of the super-powerful RTX 2080 Ti. Ray tracing or no, do you really want to run a high-end graphical feature that tanks your performance so badly you have to play at 1080p?

Pro

Incredible performance with ray tracing

But we are talking about a seriously high-end graphical feature here. This is frickin’ ray tracing. In real time. The GPU hardware inside the RTX 2080 Ti is tracing 10 billion rays of light every second. 10 BILLION.

The fact that it’s doing all that and can still run an intensive game like Battlefield 5 at 1080p, at a pretty solid-looking 60fps, is actually incredible. Look at where ray tracing was just a year ago: on the top-end GPU of the last generation, Battlefield 5 and Tomb Raider would look like little more than rather pretty slideshows.
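To put 10 billion rays a second in context, here’s a quick back-of-envelope calculation in Python. It assumes, unrealistically, that every one of Nvidia’s quoted peak rays goes to a single 60fps image; in a real game that budget is shared with everything else the GPU is doing, so treat these as generous upper bounds rather than what Battlefield 5 actually gets.

```python
# Peak figures as quoted in this article: 10 billion rays/s for the RTX 2080 Ti,
# around 1 billion for the GTX 1080 Ti. Real games won't hit these budgets.
RTX_2080_TI_RAYS_PER_SEC = 10e9
GTX_1080_TI_RAYS_PER_SEC = 1e9

def rays_per_pixel(width, height, fps, rays_per_second):
    """Upper bound on rays per pixel per frame if the whole budget went to one image."""
    return rays_per_second / (width * height * fps)

resolutions = {"1080p": (1920, 1080), "4K": (3840, 2160)}
for name, (w, h) in resolutions.items():
    rtx = rays_per_pixel(w, h, 60, RTX_2080_TI_RAYS_PER_SEC)
    gtx = rays_per_pixel(w, h, 60, GTX_1080_TI_RAYS_PER_SEC)
    print(f"{name} @ 60fps: RTX 2080 Ti {rtx:.1f}, GTX 1080 Ti {gtx:.1f} rays per pixel per frame")
```

Roughly 80 rays per pixel per frame at 1080p drops to about 20 at 4K, because 4K has four times the pixels, which helps illustrate why 1080p is the realistic target for RTX effects right now.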

I do get that it’s a lot of cash to run your games at 1080p, but you’re physically simulating individual rays of light here, and these are also just the early alpha versions of the games we’re talking about.

And even without the ray tracing shizzle, there are a host of rendering improvements built into the Turing GPU that will boost performance across the board in traditional rasterized games. Nvidia’s own figures show the RTX 2080 running up to twice as fast as the GTX 1080 with these new features enabled, and still faster without them.

Sure, they’re in hand-picked games using DLSS, but even without any of that it’s looking like a gen-on-gen performance hike of about 30-50% based on architecture alone.

Con

Adoption rates of RTX tech?

So there are all these rendering improvements and ray tracing effects that developers can drop into their games. That’s great, but the awkward question is: how many are actually going to spend the dev resources plumbing them in?

Despite the PC being undoubtedly the superior gaming platform, there’s no denying that game devs are targeting the lowest common denominator and developing for the consoles. Those machines don’t have the graphical power to utilise these effects, and they’re running AMD GPUs anyway.

There will be the GameWorks titles, which will be encouraged to introduce RTX effects for the small proportion of PC gamers with RTX cards powerful enough to use them, but the majority of developers are likely to take a more wait-and-see approach.

So, at least for this coming generation of graphics cards, these funky new effects aren’t going to find their way into a lot of games because of the amount of work, and therefore money, it will take for devs and publishers to squeeze them in.

Pro

Artificial intelligence aiding gaming

But these new features don’t necessarily take a lot of effort from developers. I got talking with technical staff at Nvidia recently, and they told me that developers have dropped some of the new rendering techniques into existing games in just five days. That’s not much time to spend for a serious performance boost.

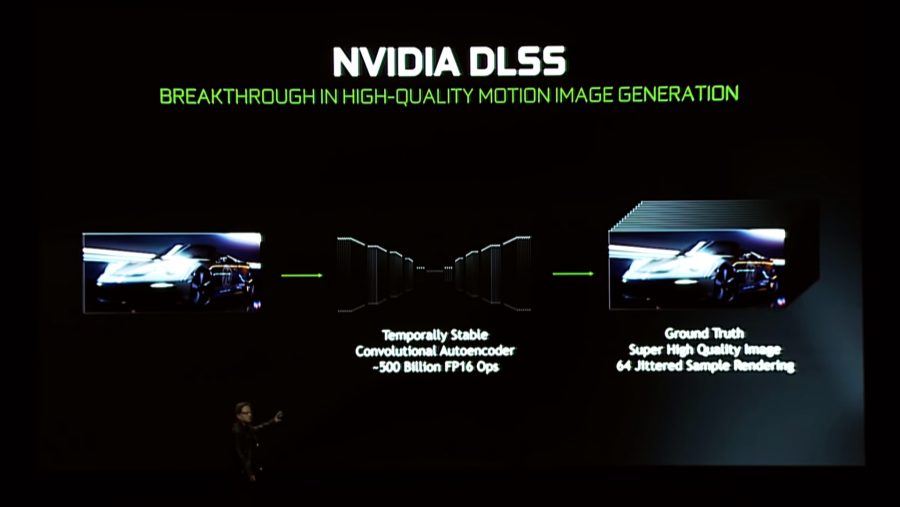

And one of the most intriguing of these performance-boosting features is Deep Learning Super Sampling (DLSS). It improves a game’s visual quality using the AI power baked into Turing GPUs in the form of the AI-focused Tensor Cores.

Nvidia is using its own supercomputer to train its deep learning algorithms to recognise how a high-resolution game should look. After being fed millions of images, even from different games, the network learns how to upscale images on the fly.

Using this method – you download the learned algorithms on a per-game basis in driver releases – you can get a further 50% performance boost. Using AI. Because the robot inside your PC knows what a high-resolution game should look like.

It’s like some super-smart version of anti-aliasing that simultaneously improves visual fidelity and boosts performance. How cool is that?
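Part of the reason an upscaler can boost frame rates is simple arithmetic: the GPU shades fewer pixels and the network fills in the rest. The internal resolution DLSS actually renders at isn’t given here, so the 1440p-to-4K pairing below is an assumption purely for illustration.

```python
# Assumed resolutions for illustration only; DLSS's real internal resolution may differ.
NATIVE_4K = (3840, 2160)
INTERNAL_1440P = (2560, 1440)

def pixel_fraction(internal, output):
    """Fraction of the output's pixels that are actually rendered before upscaling."""
    (iw, ih), (ow, oh) = internal, output
    return (iw * ih) / (ow * oh)

frac = pixel_fraction(INTERNAL_1440P, NATIVE_4K)
print(f"Shaded pixels: {frac:.1%} of native 4K")
print(f"Ideal speedup if cost scaled only with pixels: {1 / frac:.2f}x")
```

If frame time scaled perfectly with pixel count, shading 44.4% of the pixels would mean a 2.25x speedup. It doesn’t: the network itself takes time to run, and plenty of per-frame work doesn’t depend on resolution, which is why a real-world gain like the quoted 50% sits well below that ceiling.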

Con

Where’s the mainstream RTX?

With the three GPUs Nvidia has launched starting at $500, where does that leave the mainstream versions of the GeForce cards? Is there even going to be an RTX 2060 GPU, and if so, is it going to cost $400? Where are we going to get our $250 mainstream graphics cards from?

Given that the RTX 2070 is possibly the lowest-spec card capable of real-time ray tracing, we wouldn’t be surprised if Nvidia retained the GTX nomenclature for its lower-end cards.

Either that, or we’ll have RTX cards that aren’t actually capable of ray tracing. Those cards would then still need Tensor Cores baked in to use DLSS and keep the RTX-enabled schtick, which would make the mainstream, well, not mainstream priced.

And with the reported glut of 10-series GPUs still in the channel, any more mainstream 20-series GPU is likely to be a long way off, potentially next spring.

Pro

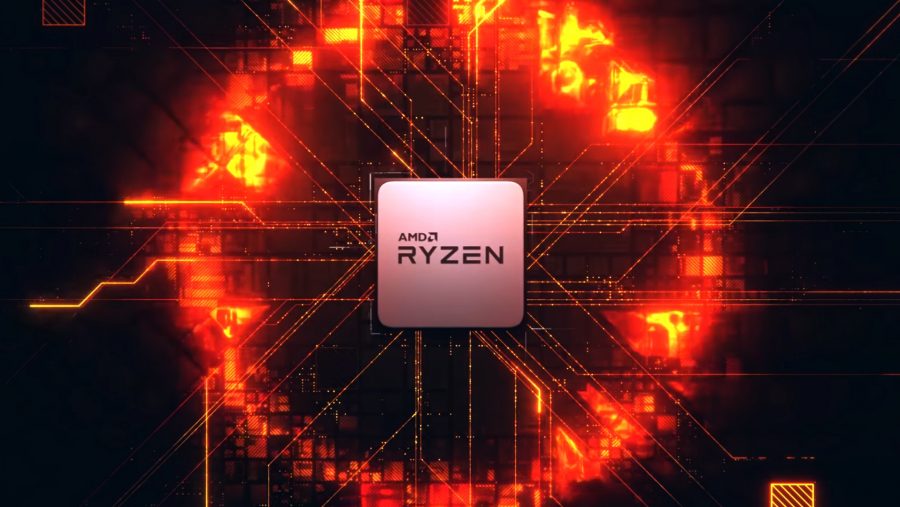

Ray tracing makes high core-count CPUs relevant

We’ve had processors with lots of CPU cores baked into them ever since AMD first went to town with its Ryzen processors. Since then it has doubled down with the second-gen Ryzen chips, and rumours are that next year’s Zen 2 CPUs are going to have even more cores in them. This has forced Intel to follow suit and pretty soon six cores and twelve threads will be the de facto standard for PC gaming.

Well, possibly.

But our games haven’t really been making use of these higher core counts… until now. When I spoke with DICE’s technical director, Christian Holmquist, he told me the studio had targeted six-core, 12-thread machines to help cope with the demands of real-time ray tracing.

It’s possible that an 8-thread CPU might be able to cope, but it would need significantly higher clock speeds than the 12-thread target DICE has been developing for. So it looks like the graphical demands of the future are going to mean good things for the CPU makers, not just the GPU manufacturers.

That’s got to please at least one half of AMD; its excellent multi-core processors are going to be used to even greater effect when paired with high-end Nvidia GPUs.

Con

First-gen pain for early adopters

This is the first generation of what is almost a whole new approach to PC gaming graphics, and that’s always a concern. We’re talking about a new GPU architecture running features no game has used before, with the cards coming out at an insanely high price.

And it’s the early adopters who will pay that price, possibly without seeing a great return, because early performance numbers are unlikely to be that high. And how many developers are actually going to make the effort to develop specifically for these niche GPUs?

Sure, the cards will still be good at traditional rendering, but you’ll be paying a premium for features you may not use that often, or maybe even at all. There’s a very good chance that the GTX 1080 Ti will be a much smarter purchase than an RTX 2080, because we’re still not entirely convinced the Turing card will be able to consistently outpunch the best of the Pascal generation, especially when there’s a chance you’ll be able to find the older card much cheaper too.

There has also been a lot of noise about a second generation appearing soon after, with a touted 7nm update coming next year. We don’t necessarily subscribe to that, but there is definitely some potential value in picking up a cheaper, still-powerful 10-series GPU and holding out for the second-gen RTX cards.

So where does that leave the next generation of GeForce graphics cards? Well, they’re certainly intriguing, and potentially offer a tantalising glimpse into what the future holds for the increased visual fidelity of PC games. In the end it’s going to be a question of weighing up whether the performance and enhanced features outweigh the exorbitant cost.

With the advances in PC graphics going on here, over the next couple of years the games on your home rig could look very different from those on even the next generation of consoles, which aren’t going to get anywhere near real-time ray tracing for a long while yet…

But whatever your feelings right now, wait and see what the independent benchmarks show once the cards are finally on our test bench; we’ll have the GPUs running soon and will give you the real numbers as soon as we’re allowed.