Our Verdict

The Nvidia RTX 2080 represents a new dawn in graphics architecture, but without its headline-grabbing ray tracing and AI-based features it’s a very expensive traditional GPU which struggles to justify its high price.

The Nvidia RTX 2080 was the first graphics card to bring us the Turing generation of GeForce GPUs… and it has been a long time coming. But as shiny as the Founders Edition Turing cards are, it almost felt wrong to review these RTX GPUs at launch.

That’s because, ever since the Nvidia 20-series was first announced at Gamescom in August – alongside the flagship RTX 2080 Ti – most of the new cards’ hype surrounded the real-time ray tracing and AI performance of the new graphics cards, two things which weren’t available at launch. And so far we’ve only had DICE’s Battlefield V, Metro Exodus, and Shadow of the Tomb Raider delivering any ray traced goodness. So we’ll still have to wait to see the true potential of the Turing architecture fully realised. Fine wine, indeed…

On the whole, though, that means the Nvidia RTX 2080 still needs to rely on its old-school rasterized rendering chops to prove its worth in the doggo-eat-doggo world of high-end graphics cards. Especially given the Radeon VII competition from the AMD side and the fact there are some still-very-competitive GPUs from the old Pascal generation to contend with.

Given the higher sticker price of the 20-series that means realistically it’s going head-to-head with the big daddy of the previous generation, the mighty GTX 1080 Ti. That was the top-spec GeForce card of the Pascal family and, in traditional rendering performance terms, a tough nut to crack. So how well does the new RTX 2080 do?

Nvidia RTX 2080 specs

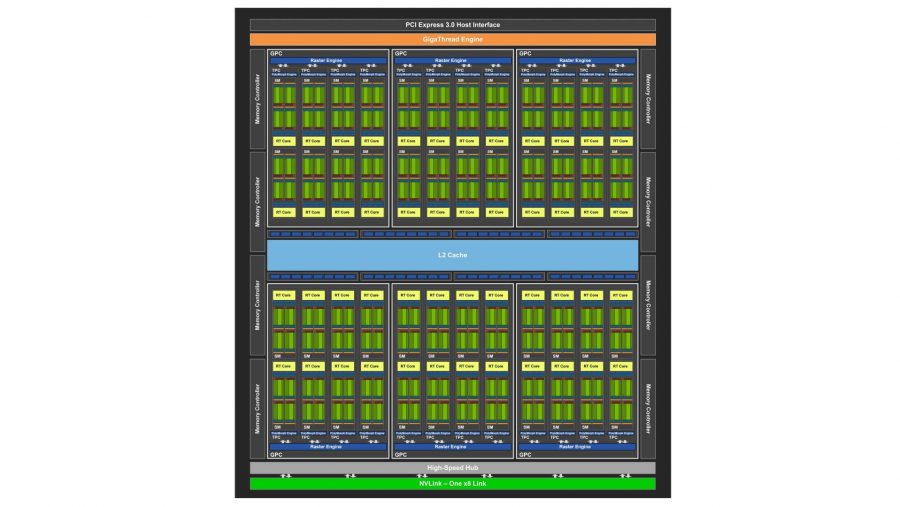

The Founders Edition cards of the new Nvidia Turing generation all sport factory overclocked GPUs with shiny twin axial coolers. That means there is a slight difference in the spec sheet between the reference RTX 2080 and the first cards Nvidia has sent out itself. They still use the same basic TU104 GPU and that means they’re both rocking some fine-ass silicon inside them.

And some mighty big silicon too. Despite nominally using a smaller production process with the Turing chips, compared with the Pascal GPUs – 12nm vs. 16nm – the RTX 2080’s TU104 is bigger than the already-pretty-sizeable GP102 of the GTX 1080 Ti. Now, an extra 74mm² might not sound like a lot, but that’s a fair jump in scale gen-on-gen. And compared with the 314mm² GP104 inside the GTX 1080 you’re talking about a difference of 231mm².
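To put those die-size gaps into proportion, here is a rough sketch of the arithmetic using only the areas quoted above. The function name is ours, purely for illustration.

```python
def die_growth(new_mm2: float, old_mm2: float) -> tuple[float, float]:
    """Return (absolute difference in mm², fractional growth) between two die areas."""
    diff = new_mm2 - old_mm2
    return diff, diff / old_mm2

# Die areas as quoted in this review.
TU104, GP102, GP104 = 545, 471, 314

vs_gp102 = die_growth(TU104, GP102)  # 74mm² larger, roughly 15.7% more area
vs_gp104 = die_growth(TU104, GP104)  # 231mm² larger, roughly 73.6% more area

print(f"TU104 vs GP102: +{vs_gp102[0]}mm² ({vs_gp102[1]:.1%})")
print(f"TU104 vs GP104: +{vs_gp104[0]}mm² ({vs_gp104[1]:.1%})")
```

So the like-for-like jump from GP104 to TU104 is far bigger than the headline 74mm² suggests.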

| | RTX 2080 Ti | RTX 2080 | GTX 1080 Ti | Radeon VII |
|---|---|---|---|---|
| GPU | TU102 | TU104 | GP102 | Vega 20 |
| Cores | 4,352 | 2,944 | 3,584 | 3,840 |
| RT Cores | 68 | 46 | N/A | N/A |
| Tensor Cores | 544 | 368 | N/A | N/A |
| VRAM | 11GB GDDR6 | 8GB GDDR6 | 11GB GDDR5X | 16GB HBM2 |
| Memory bus | 352-bit | 256-bit | 352-bit | 4,096-bit |
| Memory bandwidth | 616GB/s | 448GB/s | 484GB/s | 1,028GB/s |
| Base clock | 1,350MHz | 1,515MHz | 1,480MHz | 1,515MHz |
| Boost clock | 1,635MHz | 1,800MHz | 1,582MHz | 1,710MHz |
| Die size | 754mm² | 545mm² | 471mm² | 545mm² |
| TDP | 250W | 215W | 250W | 215W |
| Price | $1,199 (£1,099) | $700 (£650) | $699 (£699) | $686 (£635) |

In terms of the core-count, the RTX 2080 comes with 2,944 CUDA cores split across 46 streaming multiprocessors (SMs) with one RT Core assigned to each SM. It also comes with 368 Tensor Cores to make with that sweet, sweet AI-based maths.
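Those totals imply a fixed layout inside each SM. A quick sketch of the division, using only the counts quoted in this review (the variable names are ours):

```python
# Per-SM breakdown of the RTX 2080's TU104, from the counts quoted in this review.
CUDA_CORES = 2944
TENSOR_CORES = 368
RT_CORES = 46
SMS = 46

cuda_per_sm = CUDA_CORES // SMS      # 64 CUDA cores per SM
tensor_per_sm = TENSOR_CORES // SMS  # 8 Tensor Cores per SM
rt_per_sm = RT_CORES // SMS          # 1 RT Core per SM

print(f"Per SM: {cuda_per_sm} CUDA, {tensor_per_sm} Tensor, {rt_per_sm} RT")
```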

And with that level of advanced silicon on show in the GPU alone you can start to see why the extra cash is being added to the sale price of the RTX 2080. At $799 (£749) the Founders Edition is an expensive graphics card – more expensive than the GTX 1080 Ti was even at launch – but it does have a much bigger chip as well as some pricey new memory to boot.

Yes, this is our first taste of GDDR6 memory, and the RTX 2080 comes with 8GB of the good stuff. It’s running at 14Gbps, which is the standard across the board for all the announced Turing chips, counting the RTX 2080 Ti and RTX 2070 too. That gives the RTX 2080 448GB/s of memory bandwidth, which compares pretty favourably with the 11GB of GDDR5X Nvidia dropped into the equivalently priced GTX 1080 Ti at 484GB/s.
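If you want to see where those bandwidth figures come from, peak memory bandwidth is the per-pin data rate multiplied by the bus width, divided by eight to convert bits to bytes. Below is a minimal sketch. The 14Gbps rate and the bus widths are from this review, while the 11Gbps figure for the GTX 1080 Ti’s GDDR5X is our assumption, as it isn’t stated here.

```python
def memory_bandwidth_gbs(data_rate_gbps: float, bus_width_bits: int) -> float:
    """Peak memory bandwidth in GB/s: per-pin data rate x bus width / 8 bits per byte."""
    return data_rate_gbps * bus_width_bits / 8

# 14Gbps GDDR6 and the bus widths are taken from this review.
rtx_2080 = memory_bandwidth_gbs(14, 256)     # 448.0 GB/s
rtx_2080_ti = memory_bandwidth_gbs(14, 352)  # 616.0 GB/s

# Assumption: the GTX 1080 Ti's GDDR5X runs at 11Gbps (not stated in this review).
gtx_1080_ti = memory_bandwidth_gbs(11, 352)  # 484.0 GB/s

print(rtx_2080, rtx_2080_ti, gtx_1080_ti)
```

The narrower 256-bit bus is why the RTX 2080 trails the GTX 1080 Ti slightly on bandwidth despite its faster memory.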

As a factory overclocked version, this Founders Edition card has a slightly higher clock speed than the reference chip. The base clock remains the same for both, at 1,515MHz, but it has a boost clock starting at 1,800MHz. I say ‘starting at’ because the GPU Boost tech inside the Nvidia GPUs essentially makes on-paper clock speed numbers almost irrelevant. The chip will run as high as it can within set temperature and power constraints. Our RTX 2080 comfortably ran between 1,890MHz and 1,905MHz without us having to do any overclocking at all.

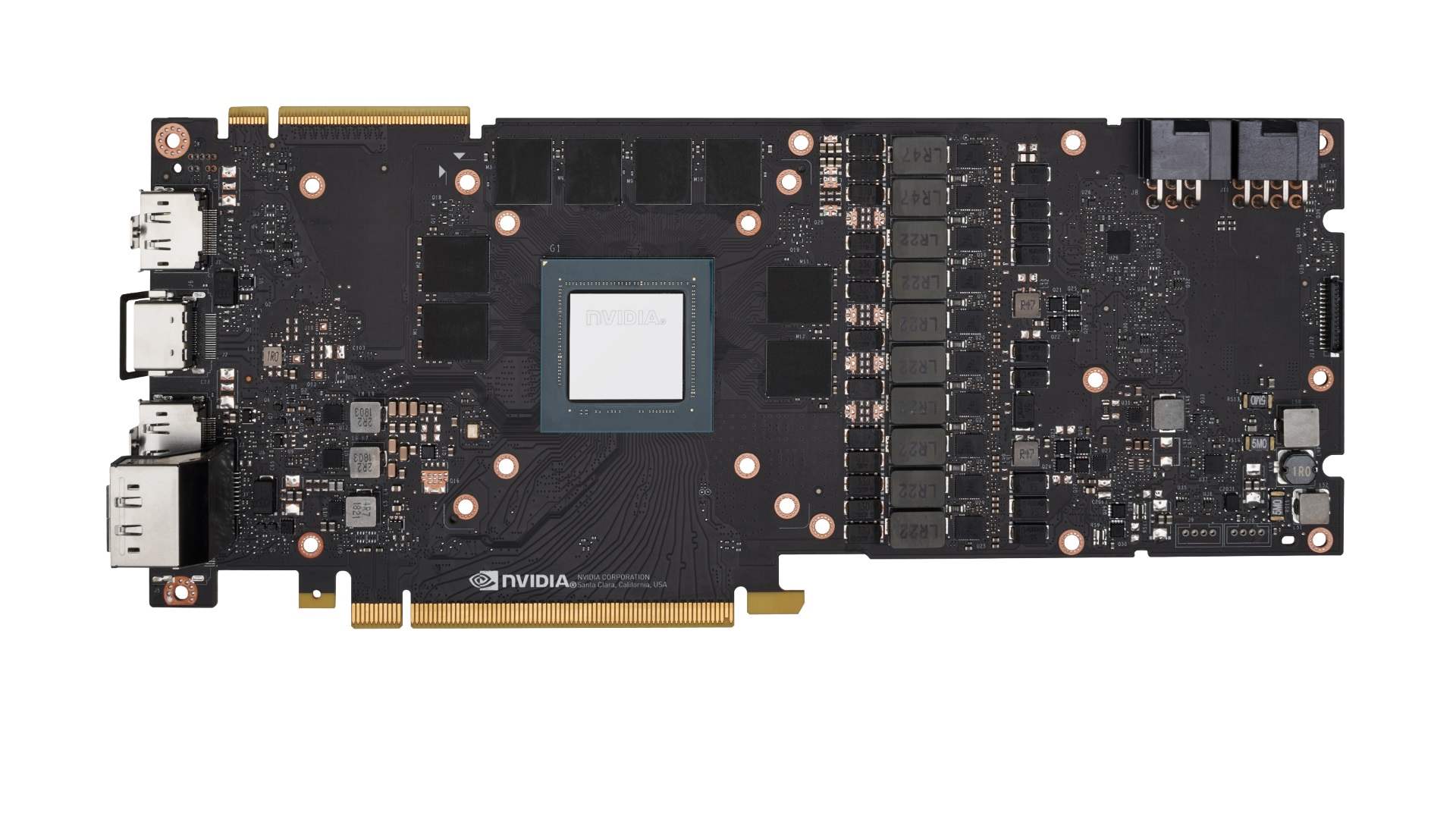

It also comes with a glorious new shroud and cooler. It’s a far more grown-up design compared with the angular Pascal Founders cards, and really does its job too. It’s quiet and keeps the GPU impressively cool. It’s a smart bit of industrial design as well. We were a little concerned about the increased weight of the card and its potential impact on your long-suffering PCIe slots, but the almost unibody design alleviates a lot of that expected pressure. The rigid body means most of the weight is on the bracket attached to your chassis, keeping things from weighing down the motherboard sockets.

Nvidia RTX 2080 benchmarks

Nvidia RTX 2080 performance

If we’re just talking about a generational performance boost then the RTX 2080 delivers a tangible performance uplift over the GTX 1080 of the Pascal GPU generation. That’s most clear at the 4K level, where at the worst you’re looking at a 20% frame rate boost from the TU104 vs the GP104 GPU.

But in Total War: Warhammer 2 and Far Cry 5 that rises to around 30%, and with Assassin’s Creed Origins you’re looking at a massive 42% boost gen-on-gen. The split though is very clearly drawn along DX11 and DX12 lines, with the older API still proving to be a comfortable place for Nvidia’s graphics architectures.

The performance differential gets smaller as you shift down in resolution terms. At 1440p you still get a relatively healthy perf boost over the GTX 1080, but if you drop down to 1080p then you get as low as 9% with the Total War: Warhammer 2 benchmark. Interestingly the same is true with the RTX 2080 Ti where with some games it’s performing at the same level as much cheaper cards.

But then these aren’t the expensive graphics cards you’re going to buy if you’re still sporting a 1080p display, or at least shouldn’t be. You need to be upgrading your gaming monitor first before spending big on big boy GPUs like these.

That’s just the RTX 2080 versus the GTX 1080, however, which is fine if you’re just looking at it from an architectural point of view, where you’re testing the second-tier GPU of the new Turing generation against the second-tier GPU of Pascal.

That’s kinda how Nvidia wants you to look at the RTX 2080’s performance, but realistically we’ve got to look at the value of the cards, and that means for us the more appropriate tiering structure should be the RTX 2080 vs. the GTX 1080 Ti.

They are both priced at almost the same level, and honestly that’s how people buy graphics cards: on a price/performance level. The RTX 2080 Ti sits on its own, essentially as the untouched, hyper-expensive, ultra-enthusiast Titan-like Turing GPU of this nascent RTX 20-series. But the RTX 2080 is sitting more at the level of the GTX 1080 Ti simply because of the cost involved.

And then you have to now factor in the AMD Radeon VII. The world’s first consumer-level 7nm GPU has been priced and specced directly to take on Nvidia’s RTX 2080, even if only on the rasterised rendering side. And in that it manages to trade blows with the GeForce card across our benchmarking suite. In some titles the 2080 is the faster GPU and in others AMD’s claims of speedier gaming bear fruit.

But on the whole the gaming performance of the RTX 2080 is just about where it needs to be to exist as an upgrade to the GTX 1080 Ti and as a marginally superior card to the Radeon VII. Across the board it’s either obviously faster than the GTX 1080 Ti, or at essentially the same level, and only rarely does the AMD card take the gaming win.

It’s admittedly tight, and if this was all there was to come from the RTX 2080 it would be a tough sell, but there is a path forwards with this Turing architecture. But asking Ti owners to make the move to an RTX 2080 will be tricky.

But with the one-touch overclocking of the new NVScanner integration, into the likes of MSI’s Afterburner and EVGA’s PrecisionX tools, it’s really easy to shift gears and have the RTX 2080 pull comfortably ahead of the GTX 1080 Ti. The level of overclocking potential in the TU104 means that even this pre-overclocked Founders Edition could go further. We had our sample running to around 2,100MHz, with the overclocking application doing all the hard work for us.

That said, the new NVScanner does also work for the 10-series GPUs now, so you can easily overclock your old card if you want to squeeze that last drop of performance out of it before you take the plunge and upgrade.

And what of AMD? Well, the writing was already on the wall with the Pascal generation and these Turing cards really put the best AMD has to offer to the sword. When you’re looking at the price differential between the RTX 2080 and the RX Vega 64 you’re talking about the Nvidia GPU being around 38% more expensive when comparing this Founders Edition card vs. the cheapest Vega 64 we’ve found on Amazon today. And you’re looking at the RTX 2080 sometimes being 50% quicker than the top Radeon card, just about outweighing the extra money you’d have to pay.

Now we have the ray tracing patch added to the latest Windows Update, Nvidia’s drivers, and Battlefield V. It’s our first taste of real-time DXR in the wild, and the first pass from EA and DICE. The Battlefield V devs have committed to improving DXR performance, and we should see a further frame rate adjustment in the coming weeks.

For now, however, it’s working remarkably well. There are multiple settings in-game to change the number of rays you want the game to track, and, while there is a very definite performance hit for enabling DXR, we still had remarkably playable frame rates from 4K ray tracing. And that’s something I didn’t think possible when I first tried the 1080/60 demo at Gamescom this year.

But outside of the pure gaming performance the gorgeous new Founders Edition cooling really comes into play. The new Turing GPUs, under that twin axial fan and almost unibody shroud, are kept incredibly cool. The RTX 2080 especially barely breaks a sweat, maxing out at 72°C throughout testing. Compared with the 85°C or 83°C of the GTX 1080 Ti and RX Vega 64 that’s seriously cool, and that makes it quiet too.

Nvidia RTX 2080 verdict

This is truly a tricky one. Were we just talking about the RTX 2080 vs. the GTX 1080, on a gen-on-gen level, then it would be a lot easier. The new Turing card comfortably beats the equivalent card of the Pascal generation. But it’s not that easy because it’s not the equivalent card. By virtue of the total shift in pricing tiers the RTX 2080 is priced at around the same level as the old GTX 1080 Ti, which means that’s honestly the more relevant comparative target for this card.

And on that front it’s much, much tighter.

For the most part the RTX 2080 is still the winner, and especially when you throw in the benefit of the one-touch overclocking. It makes hitting higher frequencies and frame rates so easy, so why would you not?

But then there’s the big, be-trunked grey mammal in the room – the actual RTX stuff. At launch we could only really compare the GTX 1080 Ti and RTX 2080 on a traditional rendering performance level, but there are a host of new techniques that can either deliver higher visual fidelity than current cards can offer or even greater performance.

The promise of real-time ray tracing, made real by Battlefield V, makes the RTX 2080 a genuinely intriguing prospect. The RTX 2080 is also probably the most realistic card to pick for it – the RTX 2070 struggles and the RTX 2080 Ti is far too expensive. And then there’s the latent AI power inside the Turing GPU – the dormant Tensor cores can be used to power a new standard of anti-aliasing called Deep Learning Super Sampling (DLSS), which is able to deliver a better visual experience than Temporal Anti-Aliasing (TAA) while using the 20-series’ AI power to deliver higher performance.

Which means we’re still talking about waiting for developers to engage with the new Nvidia technology before we actually see the benefit of the impressive new hardware in games on our PCs. And so it feels a lot like an AMD graphics card launch, where we’re waiting for its fine wine to mature. That’s what it was like reviewing the Vega GPUs, and that’s almost how it feels with the Turing cards.

But this time I am at least more confident about seeing those features being used and actually making a difference in the future. We’ve tested demos, and played alpha builds of games using both DLSS and real-time ray tracing, and they’re damned impressive. Having the Star Wars ray tracing demo running on our test rig, with a single RTX 2080, was a real goosebump moment for me. That was a demo that previously needed hundreds of thousands of dollars’ worth of GPUs to render live. You may not be able to ray trace at 4K too comfortably in this generation, but it’s certainly an impressive start. And it’s only going to improve over this and subsequent generations.

The Turing pricing, however, reminds me of Vega too, where AMD hamstrung itself with a big chip and pricey HBM2 memory. While it’s probably tempting to think Nvidia is just chucking out silicon with a massive price hike and reaping the benefits, it’s looking like it didn’t have a whole heap of choice but to price them this high. The rumblings are that the margins aren’t that great, and given the size of the TU104 GPU, and the fact that we’ve got expensive GDDR6 memory tied to it, you can start to see why these are such pricey cards.

None of which is going to matter if you’re sitting there on a GTX 1080 Ti at the moment. You’re not going to upgrade, not right now. The performance boost you’ll get from sticking the RTX 2080 into your system isn’t going to be worth the outlay, at least not until there is sufficient ray traced or DLSS goodness in the wild to justify it.

But what if you’re sporting a standard GTX 1080? Well, you’re probably still going to baulk at spending near $700 on a replacement GPU; if not, you’d have already taken the plunge on a GTX 1080 Ti. The performance boost is there, but we’re talking, at best, a 40% frame rate hike for a 70% price bump. And that’s hard to swallow for anyone.
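To make that value equation concrete, here is a back-of-the-envelope sketch of what a 40% frame rate gain for a 70% price increase does to frames per dollar. The function name is ours, and the two percentages are the best-case figures from the paragraph above.

```python
def perf_per_dollar_change(perf_gain: float, price_increase: float) -> float:
    """Fractional change in frames per dollar, given fractional perf and price increases."""
    return (1 + perf_gain) / (1 + price_increase) - 1

# Best case quoted above: 40% more frames for 70% more money.
change = perf_per_dollar_change(0.40, 0.70)

print(f"Frames per dollar: {change:+.1%}")  # roughly -17.6%
```

In other words, even in the best case you’re getting around 18% fewer frames for every dollar spent than you would with the GTX 1080.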

Though maybe waiting for it to mature will make that fine wine more palatable… so be prepared for an almost inevitable re-reviewing as soon as there are enough AI and ray traced games to make it worthwhile.