Nvidia researchers are training an AI in 3D modelling and rendering. The company is using machine learning to train a new neural network not only to understand and categorise objects in images, but also to turn those 2D images, alchemy-like, into 3D models – and all in under 100ms.

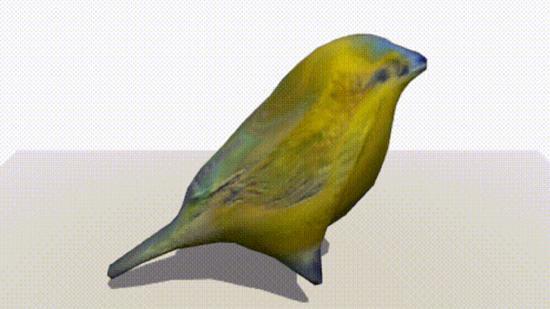

DIB-R, as the rendering framework is known, manipulates a polygon sphere to replicate objects extracted from the images fed to it – essentially moulding a replica out of digital clay via inference. Following a brief crash course in object identification and rendering, the system can mould 3D objects from reference images in the blink of an eye, without prior knowledge of the subject.
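To make the "digital clay" idea concrete, here is a minimal toy sketch of moulding a sphere by nudging each vertex towards a target shape with gradient descent. It is not Nvidia's code: the target (a stretched ellipsoid) is handed over directly as an assumption for illustration, whereas the article describes the real system inferring the shape from a 2D image. The helper names `make_sphere`, `target_shape`, `mould` and `mean_error` are all hypothetical.

```python
import math


def make_sphere(n_lat=8, n_lon=16):
    """Return the vertices of a unit sphere as a list of (x, y, z) tuples."""
    verts = []
    for i in range(1, n_lat):  # rings between the two poles
        theta = math.pi * i / n_lat
        for j in range(n_lon):
            phi = 2.0 * math.pi * j / n_lon
            verts.append((math.sin(theta) * math.cos(phi),
                          math.sin(theta) * math.sin(phi),
                          math.cos(theta)))
    verts.append((0.0, 0.0, 1.0))   # north pole
    verts.append((0.0, 0.0, -1.0))  # south pole
    return verts


def target_shape(vertex, scale=(2.0, 1.0, 0.5)):
    """Hypothetical target: the same vertex stretched into an ellipsoid."""
    return tuple(c * s for c, s in zip(vertex, scale))


def mould(verts, targets, steps=50, lr=0.2):
    """Move every vertex towards its target by plain gradient descent."""
    current = [list(v) for v in verts]
    for _ in range(steps):
        for cur, tgt in zip(current, targets):
            for k in range(3):
                # The gradient of 0.5 * (cur - tgt)**2 is (cur - tgt).
                cur[k] -= lr * (cur[k] - tgt[k])
    return [tuple(v) for v in current]


def mean_error(a, b):
    """Average distance between corresponding vertices of two shapes."""
    return sum(math.dist(p, q) for p, q in zip(a, b)) / len(a)


sphere = make_sphere()
targets = [target_shape(v) for v in sphere]
moulded = mould(sphere, targets)
print(f"error before: {mean_error(sphere, targets):.3f}")
print(f"error after:  {mean_error(moulded, targets):.6f}")
```

The point of the sketch is that the vertex count and layout stay fixed throughout and only the positions move, which is the sense in which a sphere is moulded rather than a model being built from scratch.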

The encoder-decoder architecture, a type of neural network, has to be trained on expansive datasets. Nvidia is keen to tout its V100 GPUs’ ability to do so in just two days, expediting a process that “would take several weeks to train without Nvidia GPUs.” The V100 GPU is built on the Volta architecture – the precursor to the Turing architecture powering some of the best graphics cards for gaming today.

“This is essentially the first time ever that you can take just about any 2D image and predict relevant 3D properties,” Jun Gao, one of a team of researchers who collaborated on DIB-R, says.

The paper, titled “Learning to predict 3D objects with an interpolation-based differentiable renderer”, was published by Nvidia-affiliated researchers from the University of Toronto, the Vector Institute, McGill University, and Aalto University.

Nvidia has already set its clever kit on images of birds, and it also hopes to transform images of long-extinct animals such as Tyrannosaurus rex or the dodo. Nvidia has also applied its 3D modelling AI to rendering models of vehicles – which arguably turned out like the characters from the hit (and run) movie Cars. Baby steps.

Nvidia has previously applied AI models to create super slow-mo interpolated footage, or even fake human faces – just don’t ask it to draw a cat.

While Nvidia’s DIB-R framework is still in its nascent stages, and light years behind what talented 3D artists can create, technology of its kind could have a huge impact on game development by expediting the 3D modelling process – above all for low-poly models in the periphery of a player’s vision.