The first AMD Navi graphics cards launched in July, the first GPUs to house the new RDNA architecture, making them the first since 2012 to replace the ageing Graphics Core Next (GCN) design. But the AMD RX 5700 XT and Radeon RX 5700 cards represent just the vanguard of this new generation, with mainstream RX 5500 GPUs built from the same RDNA building blocks following later this year, potentially as soon as the first two weeks of October if recent leaks are anything to go by.

But what is in this new Radeon DNA architecture that makes it so distinct from the previous generations of GCN silicon that’s been making up the red team’s GPUs for the past seven years? Dr. Lisa Su has told us, on multiple occasions now, that the RDNA architecture is a completely new, ground up design, on a par with the way its CPU designers started afresh with Zen post-Bulldozer et al.

Thanks to the full editor’s day info drop just before the E3 Next Horizon Gaming event in LA, and later white paper release, we’ve got the full low-down on just what makes this new Navi RDNA architecture tick. And the first thing to say is that, arguably, it’s not quite a ground-up redesign, this time around at least…

There are still some great parts to the ol’ GCN design – it’s a fantastic compute engine, for one – so throwing the silicon baby out with the bathwater seems a bit counter-intuitive. Therefore AMD has mostly created this first iteration of the RDNA architecture using GCN building blocks. But the main thing to note is that this is a pseudo-hybrid of the Graphics Core Next design that has been reworked specifically with gaming as its main focus.

That means GCN isn’t dead, but AMD looks to be forking its graphics efforts into one architecture for its compute-based cards, such as the Radeon Instinct range, and a separate RDNA-based design focusing on gaming as its true raison d’etre.

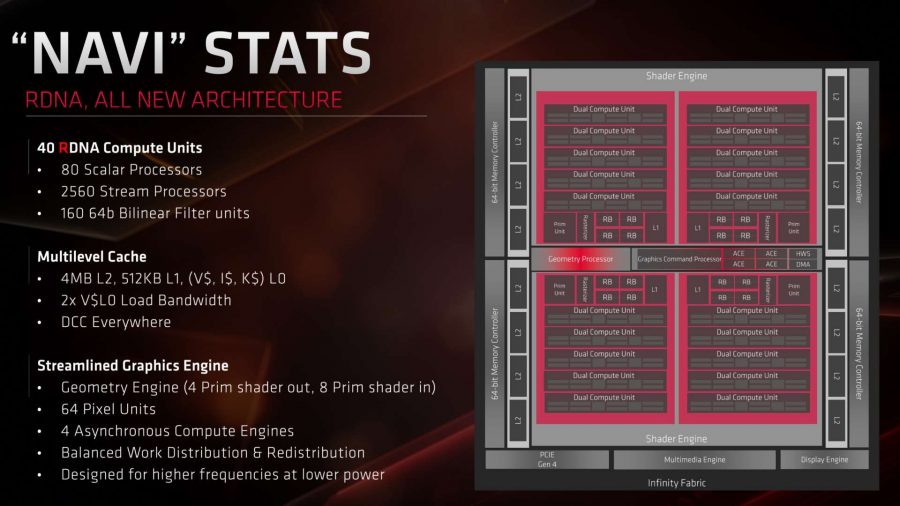

Vital stats

AMD Navi RDNA release date

The first Navi-based RDNA graphics processors were the Navi 10 GPUs baked into both the Radeon RX 5700 XT and RX 5700 released on July 7 2019. Interesting side-note; that’s the same day AMD launched its similarly 7nm Ryzen 3000 processors too.

AMD Navi RDNA specs

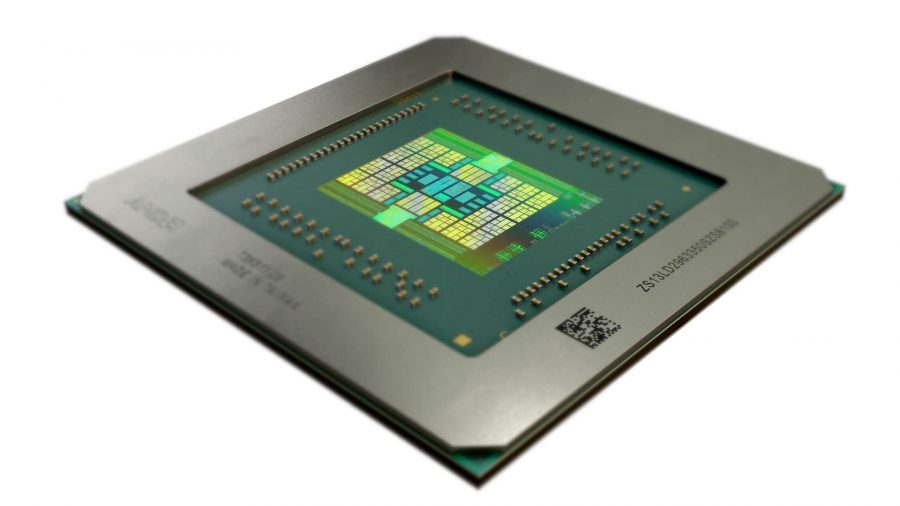

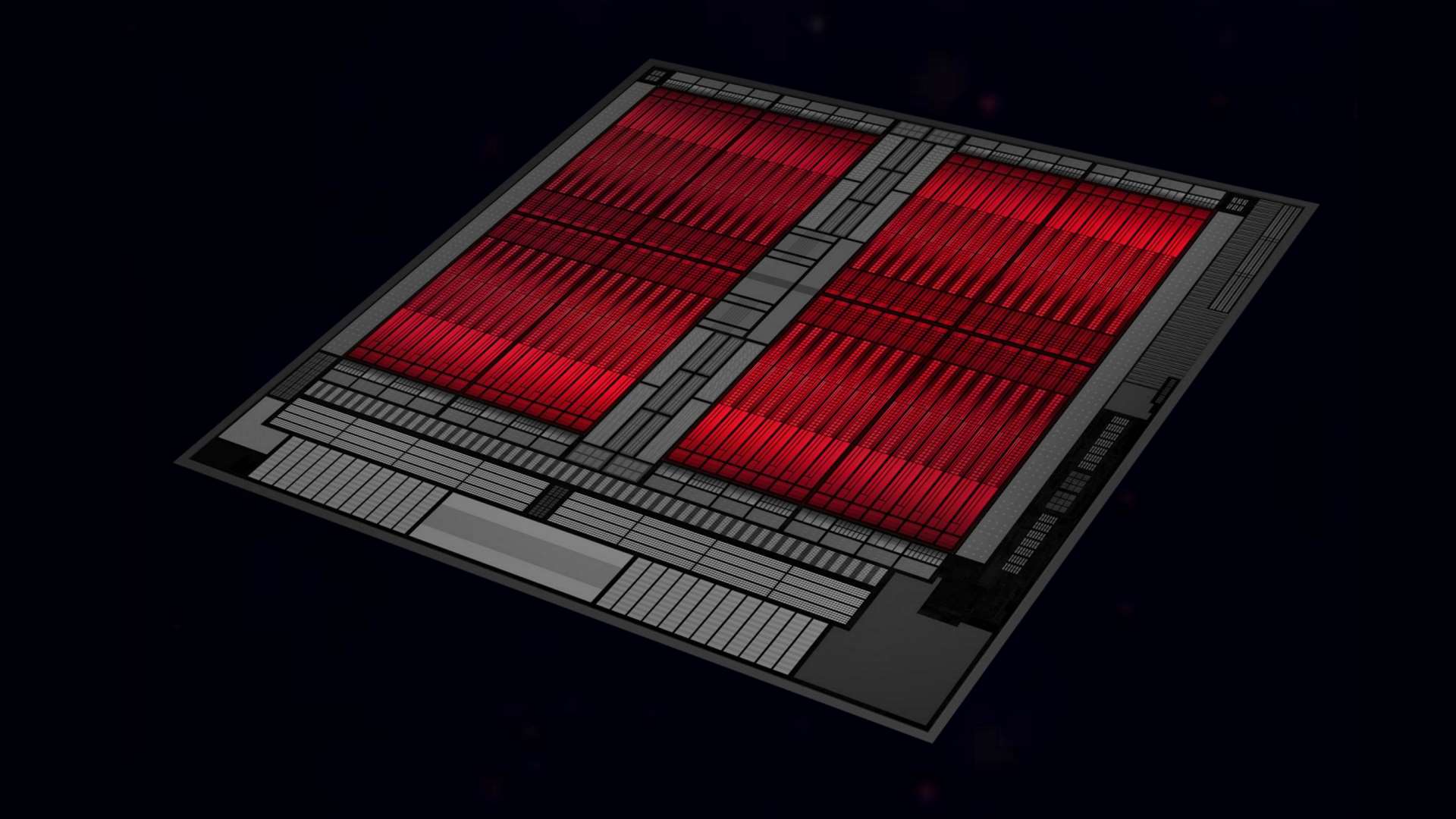

That 7nm Navi 10 GPU was the first RDNA-based graphics chip, and the full core – used by the RX 5700 XT – comes with 40 compute units, and therefore 2,560 cores across two shader engines, with 64 render output units, and 160 texture units. It’s 251mm2 and has a full 10.3bn transistors inside it. Navi has a GDDR6 memory controller, though is compatible with HBM if needed, and has PCIe 4.0 support too.

AMD Navi RDNA architecture

There are a lot of similarities between GCN and RDNA, but the big focus for the RDNA architecture is on reduced latency and improved efficiency specifically for gaming. That means Navi with RDNA is able to do more instructions on each clock cycle, while using less actual silicon. It’s also got a streamlined graphics pipeline and a much-improved cache structure too.

What is the AMD Navi RDNA GPU release date?

The new RX 5700 XT and RX 5700 graphics cards launched with the Navi 10 GPU, in two different configurations, on July 7 this year. This was the first implementation of the RDNA graphics architecture, but definitely won’t be the last because we’ve got Navi 12 and Navi 14 GPUs arriving, potentially in October, to fill out the mainstream level of Radeon GPUs.

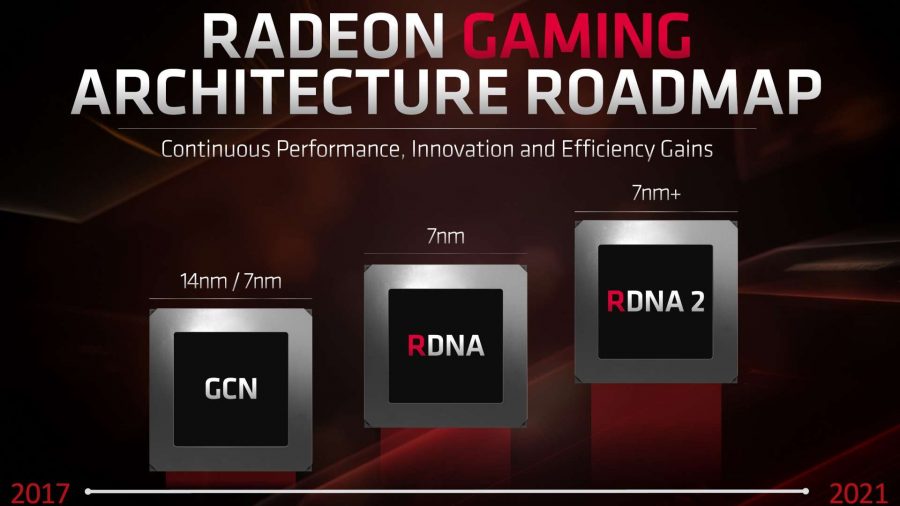

David Wang, senior vp of the Radeon Technologies Group, has explicitly stated that the second generation of RDNA will follow this one, probably next year. The gaming architecture roadmap slide indicates that the 7nm+ RDNA 2 design will arrive before 2021… so 2020, then.

There had already been speculation about a ‘big Navi’ GPU arriving next year, which would be designed to take on the best of Nvidia’s GeForce graphics cards, so the Navi 20 chip could well feature the RDNA 2 architecture.

What are the AMD Navi RDNA GPU specs?

The Navi 10 GPU was the first RDNA graphics processor to implement the new AMD architecture, and its complete specs are being brought to bear on the new Radeon RX 5700 XT card. The RX 5700 features a more cut-down version of the Navi 10 GPU.

The full-fat chip features the TSMC 7nm process technology across 40 RDNA compute units (CUs) delivering 2,560 stream processors or RDNA cores, if you will. The Navi 10 GPU also comes with 64 render output units (or ROPS) and 160 texture units. It also houses four distinct asynchronous compute engines, 4MB L2, and 512kb of L1 cache.

In terms of the actual scale of the GPU you’re looking at a GPU which is just 251mm2. That’s a hell of a shrink when you consider that the 14nm Vega 10 GPU, by comparison, is some 495mm2. Or 486mm2. Oddly it depends who you ask, or which part of AMD’s own slides you look at… some parts of the latest documentation have one figure, and some have the other. Either way, Navi 10 is a lot smaller.

And yet it still packs in 10.3bn transistors, which is still pretty good when compared with the much larger Vega 10 chip’s 12.5bn transistors.

At the moment we don’t know a huge amount about the cut-down Navi GPUs going into the mainstream. The Navi 14 GPU is likely to be the first out the door, and from some leaked benchmark entries it’s going to pack in 24 compute units and therefore 1,536 RDNA cores.

It will support up to 8GB of video memory too, but whether that’s GDDR6 or the cheaper, slightly slower GDDR5 is still up in the air.

What’s new about the AMD Navi RDNA architecture?

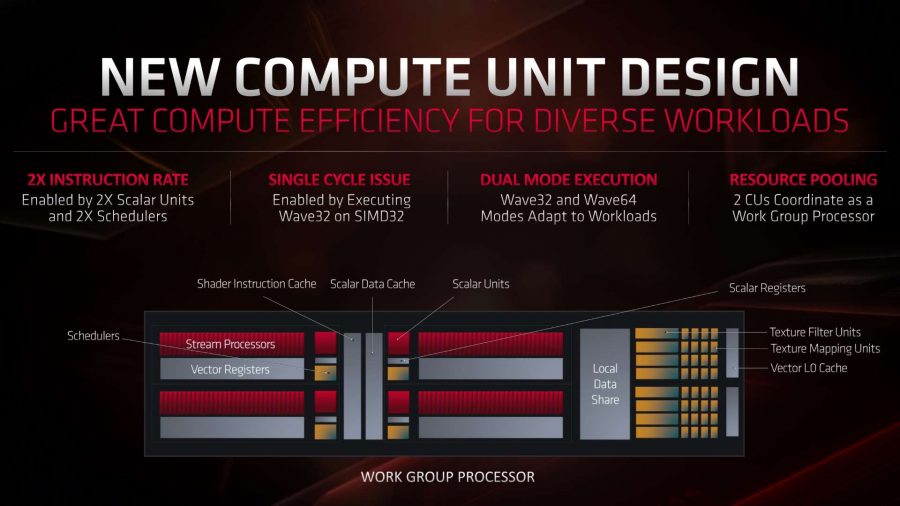

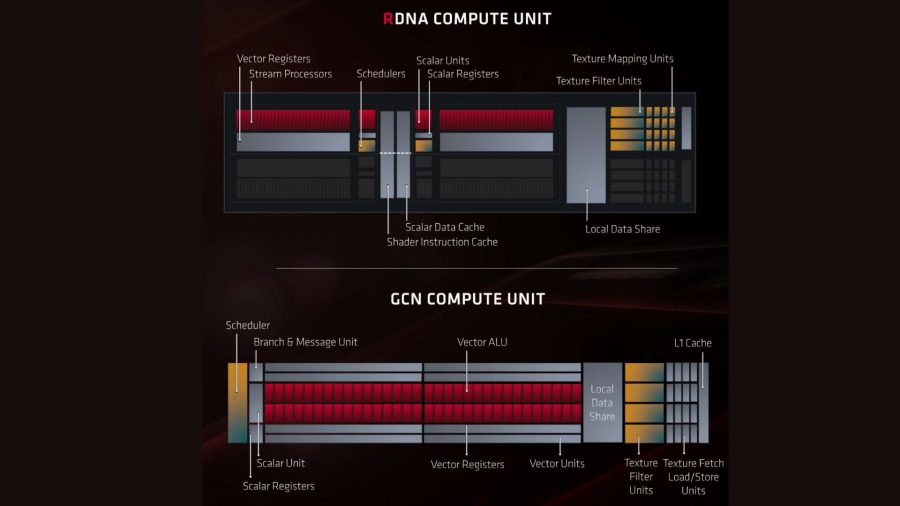

The biggest difference between the last GCN and new RDNA GPU designs, on the surface at least, are the changes AMD has been made to the compute unit. With Vega, AMD introduced the Next-Generation Compute Unit (NCU), but the RDNA Compute Unit steps in to rewrite the rules for a gaming-focused graphics processor.

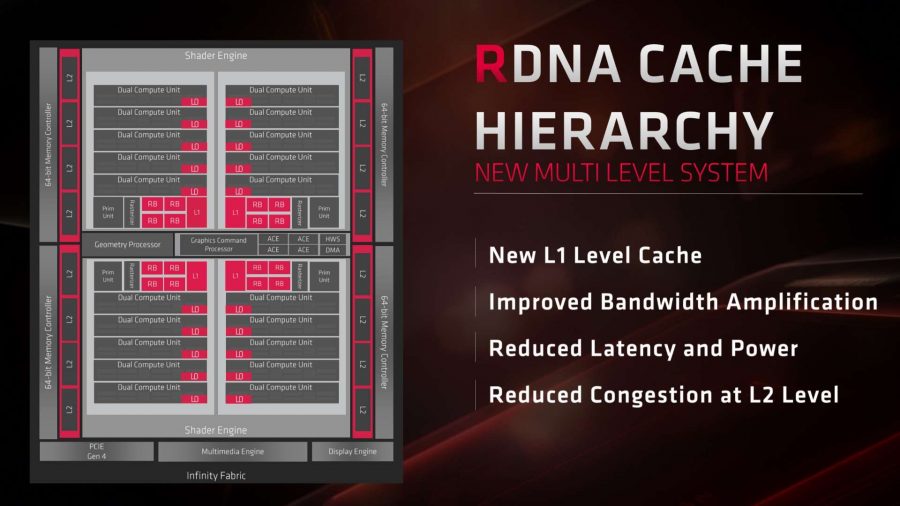

You can pretty quickly see some of the difference between the two architectures from looking at the overlay above. The Vega design has the classic GCN-class individual compute units, housing the stream processors in four batches of eight CUs, across two shader engines. The Navi GPU layout, however, has squeezed the CUs together to create the Dual Compute Unit (DCU).

There are four distinct graphics arrays in the Navi 10 GPU, each with five DCUs inside them sharing resources, split across two shader engines, which all means there are a total of 40 individual CUs across the whole die. Having the compute units configured in pairs, in what AMD is calling a Workgroup processor, allows the two CUs to work together using shared resources to cut down latency while still offering greater parallelism.

If there’s a bit of Bulldozer PTSD creeping in, I understand. The idea of taking discrete parts – in the case of the Bulldozer CPUs, its cores – and lumping them together with shared resources can potentially boost parallelism but risks lessening their individual power.

Thankfully that’s not the case here; each CU is almost entirely distinct but they can share the shader instruction and scalar data caches, as well as the local data share section of the DCU, if they need to work together. In short, this dual compute unit layout shouldn’t impact a discrete CU’s performance.

In fact, the RDNA compute units in Navi actually have more dedicated units inside them compared with a traditional GCN compute unit. Each CU now has one extra scheduler and one extra scalar unit, doubling up their counts on both scores. And that’s specifically to offer double the potential instruction rate in order to create a more efficient compute unit dedicated to standard gaming graphics workloads.

This tailoring to gaming is most obvious in arguably the biggest architectural change of the RDNA architecture: boosted instructions per clock. We’re going to descend even further down into acronym canyon now, but bear with me.

The GCN architecture uses SIMD16 units, a unit that can process 16 instructions at a time. The SIMD nomenclature stands for ‘single instruction, multiple data’ meaning that there are multiple elements performing the same operation on different data points at any one time.

And the four SIMD16 units in an overall GCN execution unit are great at doing complex calculations for scientific applications, but what they not great at is operating particularly efficiently when it comes to gaming workloads. That’s because the four SIMD16 units work on a 4 cycle issue, meaning that they can’t process an instruction in a single clock cycle.

This is where RDNA’s new execution unit design comes into its own for gaming. Instead of four SIMD16 units RDNA uses a pair of SIMD32 with a single clock cycle issue, which allows it to not only deliver higher throughput but it also means more of the Navi GPU is being utilised at any one time, making it far more efficient for graphical demands too.

With Vega specifically there are often times where large parts of the GPU aren’t doing anything when processing game graphics because they’re waiting for an instruction to go through its four cycle process. That’s going to be much less of an issue for Navi.

It’s almost entirely analogous to the way that AMD has been approaching its new Zen 2 architecture. It knows that multi-threaded processing is vital – and even more so for the parallelism required by graphics processors – but really digging down to make your single-threaded performance as good, and as efficient as possible only goes to lift everything else.

AMD went wider with its Zen design, adding more and more cores, and now it’s trying to go faster by pushing the performance of those individual cores. And that’s exactly what the GPU side of the company is doing with the move from Vega’s GCN design to Navi’s RDNA architecture. And it means you don’t necessarily need as many cores to go as fast.

AMD has also improved the cache hierarchy for RDNA and the Navi GPUs too. There’s a new L1 cache design, with the same 16kb per-CU cache, but an extra 128kb of separate L1 cache for each of the four graphics arrays on the chip. Then you have a full 4MB of global L2 cache shared by everything.

This localised extra L1 cache means that the demands on the L2 cache are lessened, offering just that little bit of extra bandwidth within the RDNA system. In fact you’re looking at slightly more bandwidth for the Navi 10 GPU than the full Vega 10 silicon, despite the fact there are only 40 CUs in Navi and 64 in Vega. On a per-CU level, then, Navi’s RDNA architecture offers it significantly increased bandwidth.

And that is quite exciting for what the big Navi core might end up delivering via the Navi 20 and RDNA 2 7nm+ design coming next year.

There are other underlying architectural improvements AMD has made with Navi’s RDNA design, which all factor into the improved gaming performance. It has also streamlined its graphics pipeline to deliver more performance per clock, for example, and has improved the colour compression algorithms, allowing more parts of the chip to actually read and write to that compressed colour data.

But the biggest thing for Navi is that laser focus on ensuring the highest IPC for specific graphics workloads, and having a dedicated gaming GPU architecture – as opposed to one that’s trying to be all things to all people – could really pay off for AMD in the long run.