These are potentially nervy times for AMD’s graphics division. Nvidia’s Turing tech has given birth to a new generation of GeForce graphics cards, and this time it’s coming out all shaders blazing, promising a new standard in PC gaming fidelity. With the performance gap between the Radeon and GeForce camps only set to widen as these new cards appear, and with the promise of real-time ray tracing and deep learning fixing up your graphics, what the hell can AMD do in response?

It all started at SIGGRAPH, where Nvidia first announced it was unveiling a new GPU architecture, codenamed Turing, and that it was going to be “the greatest leap since the invention of the CUDA GPU.” Bold claims. But the twin pillars Nvidia’s bravado is built on are Microsoft’s DXR API and its own RTX combination of hardware and software, both designed to bring real-time ray tracing to games this year and beyond.

Then at Gamescom, just a few weeks later, Nvidia showed off Radeon’s new bête noire: the 20-series consumer GPUs, the RTX 2080 Ti, 2080, and 2070.

It’s not just the super demanding real-time ray tracing feature Nvidia is bringing to the table either. No, its work in the field of AI and deep learning is also being brought to bear on some of the most important imagery problems in the world – PC game graphics. Forget automotive safety or medical imaging, this is where it’s at.

Because of that – or maybe partly because it couldn’t be bothered to strip down the professional-level Quadro chips to make the GeForce cards – the new 20-series GPUs are full of ray tracing and AI silicon. Yet, importantly, they still offer higher performance in normal gaming terms too.

So AMD needs to pick its battles carefully. With Nvidia having the edge in performance terms, team Radeon needs to be smart, and understand, and admit, that it’s not going to be able to compete at the high end of the gaming GPU market this time around.

The AMD Vega GPU was a bust in pure gaming terms – whatever the pre-release hype suggested, the fine wine approach isn’t really paying off – but in the mainstream market, ignoring the madness that followed the mining boom, the RX 580 was still the card to go for over the mighty GTX 1060. That was partly down to straight performance, but largely because there were times you could find it just undercutting the GeForce competition too.

This is also where the volume cards live. For all the hype around Nvidia’s top cards, it’s the sub-$300 cards which shift units. That means they’re the ones where, if you get the price/performance ratio right, you can make a killing.

And with Nvidia seemingly looking to price gouge the living hell out of the GPU market with the exorbitant pricing of the 20-series cards, it wouldn’t be a surprise for even the mainstream GeForce GPUs, such as the soon-to-be-released RTX 2060, to follow suit with super-high prices too. This is where AMD’s Navi can swoop in and make a real name for itself, by offering a more tantalising price/performance ratio than the green team.

From what we’ve heard from GPU manufacturers, and by virtue of the fact it’s being engineered for Sony’s next PlayStation, Navi is going to be more of a follow-up to Polaris than to Vega. That means the 7nm GPU, due to launch in the first half of 2019, is going to form the basis for next-gen AMD mainstream cards. And if team Radeon can match the general gaming performance of an RTX 2060 with a lower sticker price, then everyone’s going to want in, and it could sell a boatload.

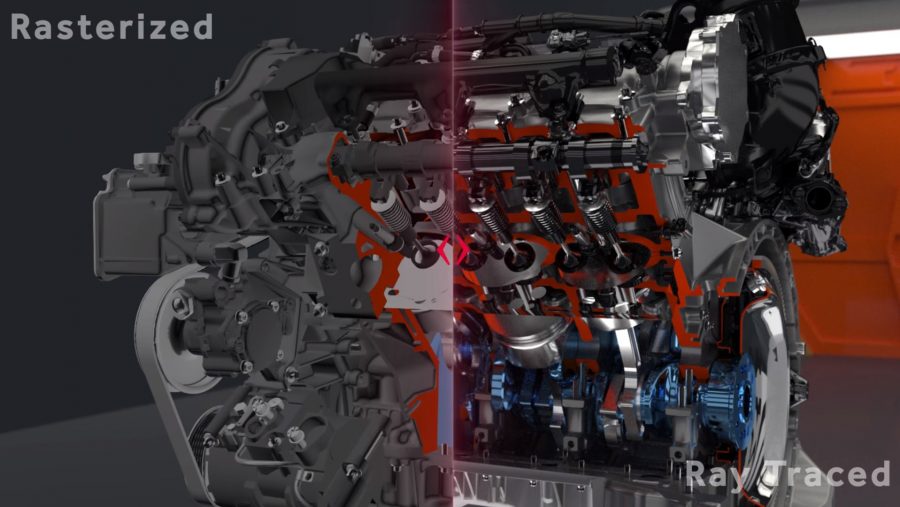

But even if AMD doesn’t have the necessary grunt to power real-time ray tracing with its mainstream GPUs, you can’t ignore the fact that ray tracing is a compute problem. AI-powered super-sampling is largely a compute problem too. These are not features which depend on which GPU architecture has the most powerful silicon for rasterized rendering.

And AMD’s GCN architecture was originally designed with a specific focus on generating as much compute power with the hardware at its disposal as possible, and as such is capable of a certain level of ray tracing.

As soon as Microsoft announced DirectX Raytracing, and Nvidia planted its flag in the ground at GDC, AMD also declared that its Radeon ProRender tech could deliver real-time ray tracing for 3D professionals. It did promise to talk more about new rendering techniques to enhance gaming graphics, but so far it hasn’t published any details about what exactly it’s doing with developers.

That said, its Radeon Rays 2.0 feature, the developer-focused ray tracing technology also discussed at GDC, has been designed to work with Microsoft’s DXR and focuses on the same bounding volume hierarchy (BVH) technique that Nvidia’s RT Cores are dedicated to. Again, it’s looking at augmenting rasterized rendering with ray traced lighting and reflections.
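To give a rough idea of what a bounding volume hierarchy actually does, here’s a toy Python sketch. It’s an illustration under our own assumptions, not how Radeon Rays, DXR, or Nvidia’s RT Cores are implemented: the scene is made of spheres rather than triangles, the tree is built with a simple median split, and every name in it is hypothetical. The trick is that a ray only tests the objects inside boxes it actually passes through, so whole chunks of the scene get skipped rather than checked one by one.

```python
import math
from dataclasses import dataclass, field


@dataclass
class Sphere:
    center: tuple  # (x, y, z)
    radius: float


@dataclass
class Node:
    lo: tuple                      # min corner of this node's bounding box
    hi: tuple                      # max corner of this node's bounding box
    left: "Node | None" = None
    right: "Node | None" = None
    prims: list = field(default_factory=list)  # non-empty only for leaves


def hit_sphere(origin, direction, s):
    """Nearest positive hit distance, or None. Assumes a unit-length direction."""
    oc = [o - c for o, c in zip(origin, s.center)]
    b = sum(d * x for d, x in zip(direction, oc))
    c = sum(x * x for x in oc) - s.radius ** 2
    disc = b * b - c
    if disc < 0:
        return None
    root = math.sqrt(disc)
    for t in (-b - root, -b + root):
        if t > 1e-9:
            return t
    return None


def hit_box(origin, direction, lo, hi, t_max):
    """Slab test: does the ray overlap the box somewhere in [0, t_max]?"""
    t0, t1 = 0.0, t_max
    for o, d, a, b in zip(origin, direction, lo, hi):
        if d == 0.0:                 # ray parallel to this pair of slabs
            if o < a or o > b:
                return False
            continue
        ta, tb = (a - o) / d, (b - o) / d
        if ta > tb:
            ta, tb = tb, ta
        t0, t1 = max(t0, ta), min(t1, tb)
        if t0 > t1:
            return False
    return True


def build(spheres, leaf_size=2):
    """Median split along the longest axis until leaves hold <= leaf_size spheres."""
    lo = tuple(min(s.center[i] - s.radius for s in spheres) for i in range(3))
    hi = tuple(max(s.center[i] + s.radius for s in spheres) for i in range(3))
    if len(spheres) <= leaf_size:
        return Node(lo, hi, prims=list(spheres))
    axis = max(range(3), key=lambda i: hi[i] - lo[i])
    ordered = sorted(spheres, key=lambda s: s.center[axis])
    mid = len(ordered) // 2
    return Node(lo, hi, build(ordered[:mid], leaf_size), build(ordered[mid:], leaf_size))


def trace(root, origin, direction, stats):
    """Closest hit via the BVH; counts sphere tests in stats['tests']."""
    best = math.inf
    stack = [root]
    while stack:
        node = stack.pop()
        if not hit_box(origin, direction, node.lo, node.hi, best):
            continue                 # ray misses this box: skip the whole subtree
        if node.prims:
            for s in node.prims:
                stats["tests"] += 1
                t = hit_sphere(origin, direction, s)
                if t is not None and t < best:
                    best = t
        else:
            stack.extend((node.left, node.right))
    return best


def brute_force(spheres, origin, direction):
    """Reference answer: test every sphere in the scene."""
    hits = [hit_sphere(origin, direction, s) for s in spheres]
    return min((t for t in hits if t is not None), default=math.inf)


spheres = [Sphere((3.0 * i, 0.0, 10.0), 1.0) for i in range(64)]
root = build(spheres)
stats = {"tests": 0}
print(trace(root, (0.0, 0.0, 0.0), (0.0, 0.0, 1.0), stats), stats["tests"])  # 9.0 2
```

With 64 spheres in a row, a ray fired straight at the first one only ends up testing two of them, where the brute-force approach checks all 64. That skipped work is exactly what dedicated BVH hardware, or a well-tuned compute implementation, is trying to speed up.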

As we said, it’s a compute problem, and Radeon Rays specifically accelerates the BVH ray tracing technique… though probably not yet to the extent that dedicated hardware does. But AMD’s plan for the future, laid out at GDC this year, is to try and double down on its compute power to even greater effect by firing fewer actual rays and using denoising techniques to make up the fidelity shortfall.
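The “fire fewer rays, then denoise” idea can be shown with another toy Python sketch. Again, this is our own hypothetical setup rather than anything AMD has published: each ray is reduced to a lit-or-shadowed coin flip against a made-up smooth lighting gradient, and a plain 3x3 box blur stands in for the far smarter denoisers real engines use.

```python
import random


def true_value(x, y, size):
    """Hypothetical 'ground truth' lighting: a smooth gradient from 0.25 to 0.75."""
    return 0.25 + 0.5 * (x + y) / (2 * (size - 1))


def render(size, rays_per_pixel, rng):
    """Each ray is a 0/1 sample (lit or shadowed), lit with probability true_value."""
    image = []
    for y in range(size):
        row = []
        for x in range(size):
            p = true_value(x, y, size)
            lit = sum(1 for _ in range(rays_per_pixel) if rng.random() < p)
            row.append(lit / rays_per_pixel)
        image.append(row)
    return image


def box_denoise(image):
    """3x3 box blur: averages each pixel with its in-bounds neighbours."""
    size = len(image)
    out = []
    for y in range(size):
        row = []
        for x in range(size):
            window = [image[j][i]
                      for j in range(max(0, y - 1), min(size, y + 2))
                      for i in range(max(0, x - 1), min(size, x + 2))]
            row.append(sum(window) / len(window))
        out.append(row)
    return out


def mse(image):
    """Mean squared error against the ground-truth lighting."""
    size = len(image)
    return sum((image[y][x] - true_value(x, y, size)) ** 2
               for y in range(size) for x in range(size)) / size ** 2


rng = random.Random(0)
SIZE = 64
cheap = render(SIZE, 2, rng)        # two rays per pixel: fast but noisy
expensive = render(SIZE, 64, rng)   # 32 times as many rays
print(f"2 rays/pixel:           {mse(cheap):.4f}")
print(f"2 rays/pixel + denoise: {mse(box_denoise(cheap)):.4f}")
print(f"64 rays/pixel:          {mse(expensive):.4f}")
```

On a smooth image like this, the blurred two-ray version lands much closer to the ground truth than the raw two-ray version. The catch is that a box blur smears genuine detail, such as sharp shadow edges, along with the noise, which is why real-time denoisers lean on extra information like depth, normals, and previous frames.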

AMD has also specifically spoken about how using multiple GPUs is something that it’s working towards with Radeon Rays. If it goes back to its old model of creating affordable GPUs to compete at a mainstream level, with a multi-GPU card to compete at the high end, there’s still a possibility we could see a Radeon gaming card with serious ray tracing potential.

We spoke with AMD’s head graphics guru, David Wang, at Computex this year, and he explained that while multi-GPU development was incredibly tough when you’re asking two GPUs to render games, on the compute side it’s much easier. The possibility of utilising multi-GPU tech to harness the combined compute power of AMD’s future Navi GPUs could make a big difference to its chances of getting advanced ray tracing running on its cards.

We’ll know whether team Radeon is planning such a thing for its next-gen gaming cards if anything linking Navi with AMD’s xGMI link leaks. That’s the Infinity Fabric-based interconnect, AMD’s rival to Nvidia’s NVLink, which is being used in the pro-level 7nm Vega GPU coming this year.

Or maybe AMD should just make like a Disney princess and let it go.

For all the naysayers claiming ray tracing is a gimmick that’s never going to change the world of PC gaming, the same was said about a host of other rendering techniques before they became widely adopted.

We’re of the opinion that ray tracing will become the de facto standard for high-end lighting in PC games. Once the performance struggles have been overcome it will be the simplest way to create high fidelity scenes without developers having to mess around with shadow maps and artificial reflections.

But probably not for a while.

Nvidia has kicked off the first generation of real-time ray tracing hardware, and it’s probably a smart move for AMD to let the green team swallow both the pain of being the early adopter and the difficulty of fostering an emergent ecosystem. AMD isn’t going to be a leader in ray tracing this generation, so it could just focus on traditional rasterized rendering, keep working on its own DirectX Raytracing implementations in the background, and hit the ground running when the next generation arrives.

With Navi potentially the last AMD GPU architecture to use the old GCN design, a future Radeon graphics design could, and probably should, be more focused on generating the compute power necessary for big-time AI shenanigans, with a little nod towards real-time ray tracing. Well, unless ray tracing goes the way of 3D Vision and Battlefield 5 is the last time it ever gets used anyway.

All AMD actually has to do with ray tracing this time around is make sure its ProRender tech is in a fit state for professionals to use on their shiny Macs. It just needs to let the turtleneck-wearing masses do some pseudo real-time professional ray traced rendering, and maybe show off the odd work-in-progress demo to prove it’s still on the case on the gaming front.

So, while there might be a little nervous energy around AMD’s Santa Clara HQ at the thought of Nvidia’s new GPUs, it doesn’t have to be all doom and gloom on the Radeon graphics front.

There have been a lot of green-blooded folk claiming the end is nigh for team Radeon, with Nvidia seemingly owning real-time ray tracing. But while Nvidia is currently the only company actually showing real hardware running ray traced games at playable frame rates, ray tracing is not an Nvidia-exclusive technology at heart.

All of the demos Nvidia showcased at Gamescom were built using Microsoft’s DXR API. They may have been developed and run on Nvidia hardware and use the RTX frameworks, but they’re built on a base API that could run on other hardware in the future, should that tech arrive.

So AMD might have to cede the high end for the next year or so, but this isn’t the end of Radeon, not by a long shot; it’s just the start of another graphics battle.