Well, 2018 has been quite the year for Intel. And if I’m being honest, I don’t mean that in an overwhelmingly positive way. Certainly from a PR point of view things have been very, very difficult for the chip-making monster, which lost a CEO and almost an entire manufacturing process node. Intel has also lost its place as the biggest semiconductor company on the planet, with Samsung taking over this year. How very careless…

But that’s not to say that 2018 hasn’t still been a very successful year for Intel from a financial point of view. Its share price has gone up and down, as the markets will, and has ended up at practically the same place it started the year. Considering what a volatile year it’s been for the big tech companies, that can almost be considered a win on its own.

Intel has also reported record revenue figures for three consecutive financial quarters this year, and has raised its expected 2018 revenue forecast after every quarter’s results have been posted.

So, from a purely financial standpoint it’s been great. From a product, marketing, and PR perspective, however, it’s been a bit of a nightmare. Though it is at least ending the year on a positive note… but how did it all start out?

Intel 2018 review: January – March

It’s got to be said that 2018 had the worst possible start for Intel, with both the Spectre and Meltdown bugs announced around the opening of the CES show in early January.

Admittedly it was bad for a host of tech companies, with AMD CPUs also affected by the security flaws, which exploited speculative execution paths to give malevolent users the ability to read system memory. There were different variants discovered, different flaws unveiled as the year went on, and software fixes rolled out. Future chips have also had hardware mitigations added, but through it all has been the spectre (teehee) of performance degradation with any of the mitigations used.

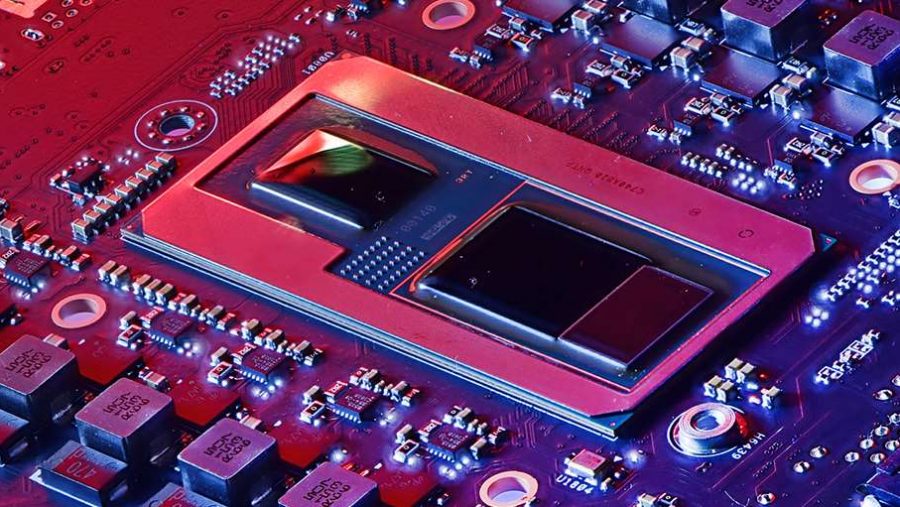

But there was a little hope in early 2018 too, with Intel announcing the details of the Kaby Lake G CPUs which combined Intel CPUs with AMD’s Vega graphics. Was this the start of a wonderful year filled with inter-company cooperation? A year where all past ills were forgiven and everyone worked together for the greater good?

No, that is not how 2018 will be known.

February saw Intel actually pulling the integrated graphics – its own this time – from a set of processors. Its Cannonlake mobile chips, pretty much the only announced 10nm processors, were leaked with some missing graphics components, reportedly so they could deal with the terrible yields of the 10nm node.

Then in March Intel released its latest NUC, the Hades Canyon NUC. It was crazy expensive, but held the fastest version of Intel’s AMD collaboration chip inside it which meant it had genuinely impressive gaming performance.

Intel 2018 review: April – June

Ever since Raja Koduri bailed out of AMD and joined Intel to head up its Core and Visual Computing group, the company had been teasing the prospect of a discrete GPU architecture of the future. April was when we first heard the Arctic Sound codename, suggested to be the name given to the discrete graphics card his team was working on.

But for every ray of light in 2018 came a shower of dirt. And so in April Intel also announced that it was, once again, delaying the volume release of CPUs based on its 10nm production process. Originally set for a late 2018 launch, it had been kicked back to sometime in 2019, Brian Krzanich announced. Later in the year that became late 2019.

But it wasn’t just the 10nm CPUs this affected, as the first hints that the delay had wider ramifications became clear in May. Intel announced it was putting its H310 chipset on hiatus as it couldn’t manufacture enough 14nm silicon to cope with demand.

Because it hadn’t switched some of its manufacturing over to the 10nm node, and had moved some 22nm production onto the 14nm process, everything needed 14nm silicon. This unprecedented demand proved too much for Intel from May onwards, and is still an issue now.

But June was also a time for celebration. The 40th anniversary of the seminal 8086 chip was marked by Intel releasing the very, very limited edition Core i7 8086K processor, a 5GHz hex-core, 12-thread CPU. It even gave away 8,086 chips in a sweepstake.

On the same day it also announced a massive 28-core processor, giving Intel back its core-count lead against the AMD Threadripper opposition. Sadly for Intel that lead lasted less than 24 hours, and was also marred by the admission that its presenter had forgotten to mention the live 5GHz demo of the 28-core chip was actually overclocked and not running at an out-of-the-box frequency, as had been hinted.

June only got worse too, with long-time CEO, Brian Krzanich, being forced to resign over revelations about an extra-marital affair with a fellow employee. It was before his time as CEO, but Intel takes a dim view of such things and Krzanich had to go. Robert Swan took over immediately as interim CEO, and is still there. Loving life. Probably.

Intel 2018 review: July – September

July was mercifully quiet for Intel, but things started heating up in August as it announced the first consumer QLC SSD, the 660p. The cheaper NAND flash meant the 512GB version was just $100, an absolute bargain at a time when SSD prices were being pushed ever higher by memory prices.

August also saw Intel with its first official tease of the discrete GPU set to arrive in 2020, promising to “set our graphics free.” We still haven’t heard much about the project, but Intel has repeatedly promised a 2020 launch, so that seems carved in stone. Until it inevitably slips, obviously.

September saw JP Morgan claim that Intel’s CPU shortage was worsening throughout the year, which potentially helped prompt the company into a sharp response. A response to the tune of an extra $1bn to help shore up its manufacturing deficiencies.

But finally Intel was able to unveil a consumer desktop CPU matching AMD’s eight-core, 16-thread configuration, and announced the “world’s best gaming processor” with a whole host of independent performance benchmarks to prove it.

I’ll admit, sitting at the event in New York I took the benchmarks at face value, but it later transpired the company responsible had been inadvertently hobbling the competing AMD CPU, undeniably skewing the results.

The Core i9 9900K was released, and is the fastest gaming CPU around, but its super high price means that it struggles to really make itself a worthwhile opponent for the similarly specced, and far cheaper, AMD Ryzen 7 2700X.

Intel 2018 review: October – December

In October serial Intel-baiter Charlie Demerjian published a story claiming Intel was killing off its original 10nm node. This prompted Intel into an almost immediate response – something it generally doesn’t do – to say that there was nothing wrong with 10nm, it was all fine, and it was “making good progress.”

It was then announced Intel was cutting gaming CPU supply to the DIY market and system integrators over Q4, traditionally a busy time for all concerned. Again, it was all down to the 14nm shortage linked to the delay in the 10nm production process.

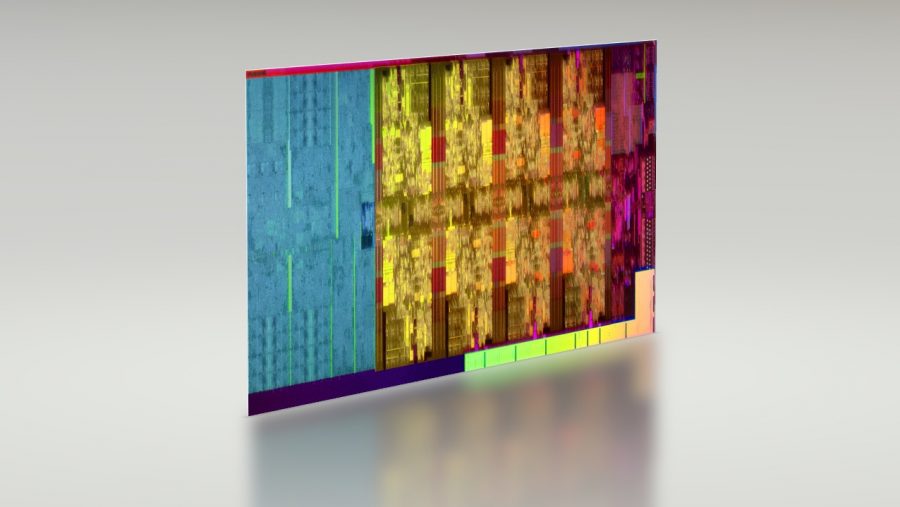

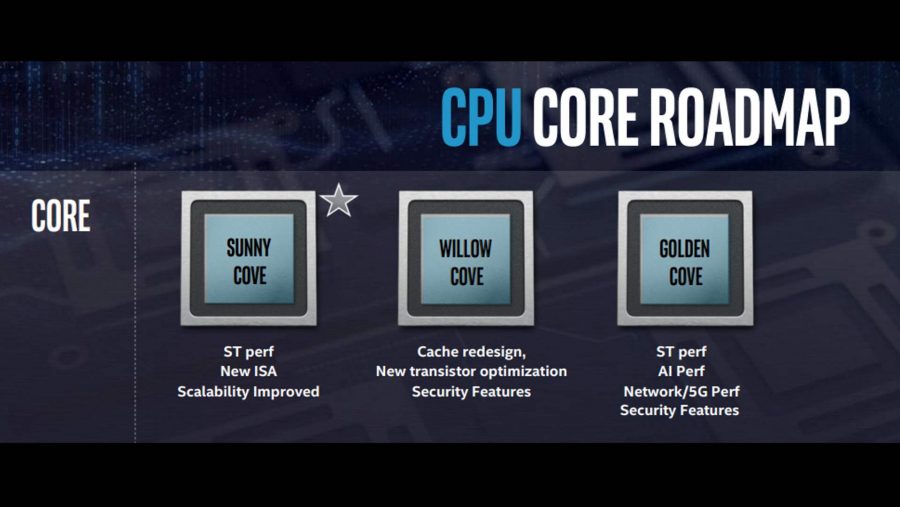

Cut to December and Intel announces the new Sunny Cove CPU microarchitecture on a new 10nm node. This all happened at the Intel Architecture day, where Murthy Renduchintala is reported to have said Intel has “humble pie to eat right now, and we’re eating it. My view on 10nm is that brilliant engineers took a risk, and now they’re retracing their steps and getting it right.”

Which does kind of suggest Intel did halt work on its previous 10nm node, making its engineers go back and try and fix the problems that got them to that stage. I mean, whatever happened to Ice Lake? It doesn’t look like that codename’s going to make it down to the desktop, stopping at the Ice Lake Xeons announced in the summer. The lakes are over and we’re moving into the desktop Cove era next year.

But Intel has at least finished the year on a positive note, announcing its new CPU roadmap as well as the name of its upcoming discrete GPU architecture, Intel Xe. Or Intel Xᵉ. We’re not entirely sure which, though it’s probably pronounced the same. And it’s only a short hop, skip, and a jump from being called the Intel Xen as a bit of AMD Zen baiting too. Missed opportunity there…